By Massimiliano Moruzzi, founder and CEO of Xaba.ai

Walk into any modern manufacturing facility and you’ll see the same tension playing out on the floor: incredibly capable machines, hemmed in by the assumption that everything will go exactly as planned. For decades, that assumption held well enough, but today it’s becoming a liability.

Manufacturers are under mounting pressure to produce faster, absorb more variability, and cut downtime, all while a new wave of AI promises to deliver “intelligence” without the complexity of traditional programming.

The pitch sounds compelling, but most of this AI is built on the same foundation as your company’s chatbot, and you cannot run a factory on prompts.

The limits of prompt-based AI in physical systems

Prompt-based AI, the technology behind conversational tools and digital copilots, is extraordinarily good at processing language and generating responses.

In digital environments, where a wrong answer is easy to correct, that is enough. On the factory floor, the calculus is entirely different.

When a robot acts on flawed reasoning, the cost isn’t a bad paragraph; it’s a halted production line, a damaged $200,000 piece of equipment, or a worker in harm’s way.

A system that doesn’t inherently understand force, torque, friction, or material behavior cannot reliably make the micro-adjustments that real-world production demands. In controlled demos, that gap is easy to overlook; in production environments, it becomes a serious liability.

The exposure goes beyond scrap and rework costs. Unpredictable automated behavior introduces genuine safety risk for human operators, and when something goes wrong in an automated system, liability follows, whether regulatory, legal, or reputational.

When ‘good enough’ AI isn’t good enough

Manufacturing leaders don’t evaluate technology on novelty; they evaluate it on outcomes. When AI systems that rely on statistical pattern-matching, with no understanding of physical laws, are deployed on the factory floor, the outcomes aren’t just disappointing; they’re expensive.

A robot unable to adapt to minor variability doesn’t produce a suboptimal result; it halts an entire line. Incorrect execution doesn’t just slow things down; it degrades or destroys machinery that costs hundreds of thousands of dollars to replace. And small errors in high-volume production don’t stay small: they compound across every cycle, every shift, and every batch.

In this environment, “mostly correct” doesn’t hold up; it’s the kind of margin for error that turns into real cost, real downtime, and real risk.

Teaching robots intent, not instructions

For decades, industrial robots have been programmed line by line: every motion is predefined, and every scenario is anticipated in advance. That approach delivers consistency, but only inside the narrow set of conditions it was built to expect. The moment something deviates, the system doesn’t adapt; it fails.

The next shift in automation isn’t about replacing code with prompts; it’s about moving from rigid instructions to something more fundamental, which is teaching machines intent.

Rather than specifying exactly how a robot should perform every step of a process, manufacturers are beginning to define what needs to be achieved and allowing the system to determine how to get there based on what’s actually happening in real time.

That requires a fundamentally different kind of intelligence, one that understands the physical world well enough to reason through it, not just respond to it.
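The difference between specifying a motion and specifying a goal can be illustrated with a deliberately simple sketch. It is not the author’s system and involves no learned physics; it only contrasts a pre-programmed press depth with a feedback loop that is told the target force. The linear contact model, the function names, and all numeric values are assumptions made for illustration.

```python
def contact_force(depth_mm, stiffness_n_per_mm):
    """Hypothetical linear contact model: force grows with press depth."""
    return stiffness_n_per_mm * max(depth_mm, 0.0)


def scripted_press(stiffness_n_per_mm, depth_mm=2.0):
    """Instruction style: always move to the same pre-programmed depth."""
    return contact_force(depth_mm, stiffness_n_per_mm)


def goal_driven_press(stiffness_n_per_mm, target_force_n=10.0,
                      gain_mm_per_n=0.02, steps=200):
    """Intent style: adjust depth from the measured force until the goal is met."""
    depth_mm = 0.0
    for _ in range(steps):
        measured = contact_force(depth_mm, stiffness_n_per_mm)
        depth_mm += gain_mm_per_n * (target_force_n - measured)
    return contact_force(depth_mm, stiffness_n_per_mm)


# Parts vary in stiffness; only the goal-driven version holds the target force.
for k in (4.0, 5.0, 6.0):
    print(k, scripted_press(k), round(goal_driven_press(k), 3))
```

With the assumed values, the scripted press only hits the 10 N target when the part has exactly the stiffness it was programmed for, while the goal-driven loop converges on the target for each part.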

Why physics-based AI changes the equation

To operate reliably in a physical environment, AI must be grounded in physics. That means it is not just trained on operational data but built on the underlying principles that govern how the real world behaves: how materials respond to force, how tools interact with surfaces, and how wear and environmental variation affect outcomes over time.

This is what allows a system to reason about execution rather than simply pattern-match against past inputs.

When conditions change (and in manufacturing, they always do), a physics-based system doesn’t freeze or fail. When a part arrives slightly out of tolerance, it adjusts its approach; when a tool begins to wear, it compensates before quality degrades; and when conditions on the line shift, it recalibrates in real time without waiting for a human to intervene.

The result isn’t just automation; it’s automation that holds up under the variability that defines actual production.

From perfect conditions to real-world production

This is the gap that most automation investments quietly fall into: systems that perform beautifully under controlled conditions and struggle the moment reality asserts itself.

Factories are not controlled environments, and variability shows up everywhere, across materials, suppliers, operators, and machines. The distance between a controlled demo and a live production floor is exactly where inefficiencies accumulate and costs compound.

Physics-based AI is built for that distance, because it doesn’t require perfect inputs to produce reliable outputs; it operates in the conditions manufacturers actually face, not the idealized ones that make for impressive presentations.

What this means for decision makers

For manufacturing leaders evaluating AI, the key question is not whether a system can perform in a demo – it’s whether it can sustain performance in production.

That means asking:

- Does the system understand the physical processes it is controlling?

- Can it adapt to variability without human intervention?

- What happens when conditions deviate from the expected?

- What is the cost of failure – in downtime, damage, and risk?

AI in manufacturing is not just a software decision; it is an operational, financial, and safety decision, and the stakes of getting it wrong are distributed across all three.

The path forward

The industry is moving beyond rigid, instruction-based systems and prompt-driven responses toward a new generation of automation. It is built on machines that understand the physics of their environment and can act on that understanding in real time, under real conditions, without relying on human oversight to catch errors.

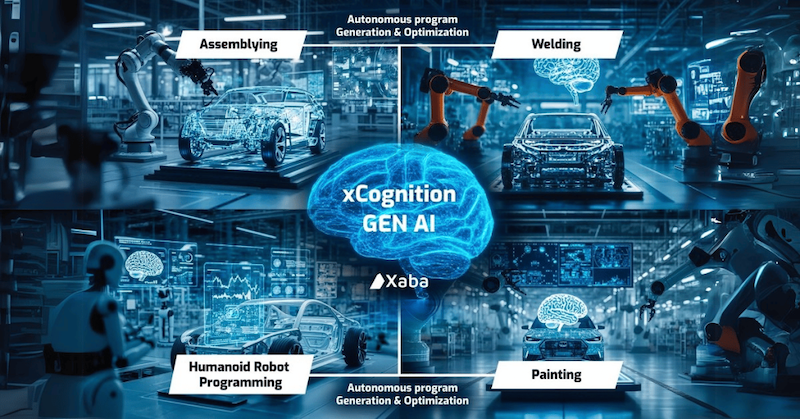

This is where technologies like Xaba’s xCognition Physics-AI Synthetic Brain come in. Unlike copilot AI systems or vision-language models, xCognition is designed to go beyond pattern correlation – addressing the reality that prompts, pixels, and video alone are not sufficient to enable reliable autonomy in industrial robotics.

True autonomy requires physical cognition: an understanding of the underlying dynamics that govern processes like welding, machining, assembly, and material behavior.

By leveraging rich, multi-modal time-series data – load forces, temperatures, voltages, power, acceleration, pressure, and more – this type of physics-AI framework can discover the governing mathematical equations of a system directly from real-world data, rather than approximating behavior from surface-level patterns.
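As a rough illustration of what discovering governing equations from time-series data can look like, the sketch below uses sparse regression (sequentially thresholded least squares, the idea behind SINDy-style methods). This is a generic, publicly known technique and not a description of how xCognition works. The thermal model, its constants, the candidate term library, and the function name `fit_sparse_dynamics` are all assumptions chosen for the example, and the data is synthetic and noise-free.

```python
import numpy as np


def fit_sparse_dynamics(library, derivative, threshold=1e-3, iterations=10):
    """Sequentially thresholded least squares: fit, zero small terms, refit."""
    coeffs = np.linalg.lstsq(library, derivative, rcond=None)[0]
    for _ in range(iterations):
        active = np.abs(coeffs) >= threshold
        coeffs[~active] = 0.0
        if not active.any():
            break
        coeffs[active] = np.linalg.lstsq(
            library[:, active], derivative, rcond=None
        )[0]
    return coeffs


# Synthetic "sensor" data from an assumed thermal model (illustration only):
#   dT/dt = -a * (T - T_amb) + b * P
a, b, t_amb, dt = 0.5, 0.2, 20.0, 0.01
rng = np.random.default_rng(0)
power = np.repeat(rng.uniform(0.0, 100.0, size=20), 100)  # piecewise-constant
temp = np.empty(power.size + 1)
temp[0] = t_amb
for k in range(power.size):  # forward Euler integration
    temp[k + 1] = temp[k] + dt * (-a * (temp[k] - t_amb) + b * power[k])

# Forward differences match the Euler steps exactly; real, noisy signals
# would need a noise-robust derivative estimate instead.
d_temp = np.diff(temp) / dt
T = temp[:-1]

# Candidate terms the regression is allowed to choose from.
names = ["1", "T", "P", "T*P", "T^2"]
library = np.column_stack([np.ones_like(T), T, power, T * power, T**2])

coeffs = fit_sparse_dynamics(library, d_temp)
for name, c in zip(names, coeffs):
    if c != 0.0:
        print(f"{name}: {c:.3f}")
```

In this idealized setting the regression keeps only the constant, `T`, and `P` terms and drops the two nonlinear candidates, recovering the structure of the assumed model rather than a black-box fit. Real sensor data brings noise, unknown candidate terms, and unit scaling issues that this sketch does not address.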

This approach enables machines not just to perceive, but to reason, predict, and act deterministically. The result is a system that delivers:

- Deterministic behavior – consistent outcomes under real-world variability

- Adaptive intelligence – real-time adjustment of parameters and trajectories

- Scalable deployment – transferable knowledge across machines, cells, and factories

In manufacturing, intelligence has never been about generating the right answer. It is about executing the right action, every time, when it counts.

About the author: Massimiliano Moruzzi is CEO and co-founder of Xaba.ai, specializing in AI-driven industrial automation and robotics. He previously held senior engineering and scientific roles at Autodesk, Magestic, and Ingersoll Machine Tools.