The Allen Institute for AI (Ai2) has released MolmoAct 2, an open-source robotics foundation model designed to improve how robots perform real-world physical tasks, as researchers continue pushing beyond highly controlled laboratory demonstrations toward more adaptable automation systems.

The new model, announced this week by the Seattle-based AI research organization, is positioned as a major upgrade to its earlier MolmoAct system and reflects a broader industry effort to develop more general-purpose robotics AI capable of adapting to changing environments without extensive task-specific programming.

Ai2 describes MolmoAct 2 as an “open foundation for robots that work in the real world”, arguing that many current robotics systems remain too brittle and heavily tuned for narrow applications.

“AI writes our emails, debugs our code, and books flights for us. In the physical world, though, it still struggles,” Ai2 said in a blog post announcing the release.

“Getting a robot to reliably load a dishwasher or prep test tube samples in a lab is still far beyond what most systems can dependably do for hours on end.”

Unlike many robotics models that rely heavily on fixed routines or extensive per-task tuning, MolmoAct 2 uses what Ai2 calls an “Action Reasoning Model” architecture that allows the system to reason about three-dimensional environments before acting.

According to Ai2, the model can perform a range of manipulation tasks “out of the box”, including bimanual operations such as towel folding, object sorting, tray lifting, and table clearing.

Ai2 says the model also runs inference substantially faster than the original MolmoAct system, allowing more responsive robot control.

“A single action call takes about 180 ms in the base model and 790 ms in MolmoAct 2 with adaptive depth reasoning, versus 6,700 ms in MolmoAct,” Ai2 said.

The organization claims the speed improvement enables near real-time robot behavior rather than visibly delayed movements between actions.
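To put the quoted latencies in perspective, the short Python sketch below converts them into speedup factors and an upper bound on action calls per second. The three millisecond figures come from Ai2's statement; the function names are illustrative, and the sketch assumes calls run back to back with no other overhead, which the announcement does not specify.

```python
# Per-call latencies quoted by Ai2, in milliseconds.
LATENCY_MS = {
    "MolmoAct 2 (base)": 180,
    "MolmoAct 2 (adaptive depth reasoning)": 790,
    "MolmoAct (original)": 6700,
}


def calls_per_second(latency_ms: float) -> float:
    """Upper bound on action calls per second, assuming back-to-back calls."""
    return 1000.0 / latency_ms


def speedup(baseline_ms: float, new_ms: float) -> float:
    """How many times faster a call is compared with the baseline."""
    return baseline_ms / new_ms


if __name__ == "__main__":
    baseline = LATENCY_MS["MolmoAct (original)"]
    for name, ms in LATENCY_MS.items():
        print(
            f"{name}: {calls_per_second(ms):.2f} calls/s, "
            f"{speedup(baseline, ms):.1f}x vs. original"
        )
```

On these figures, the base model is roughly 37 times faster per call than the original MolmoAct, and the adaptive depth reasoning variant roughly 8.5 times faster.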

The release includes full model weights, datasets, and an open-source robotics action tokenizer, reflecting Ai2’s emphasis on open AI development in robotics – an area where many leading systems remain proprietary.

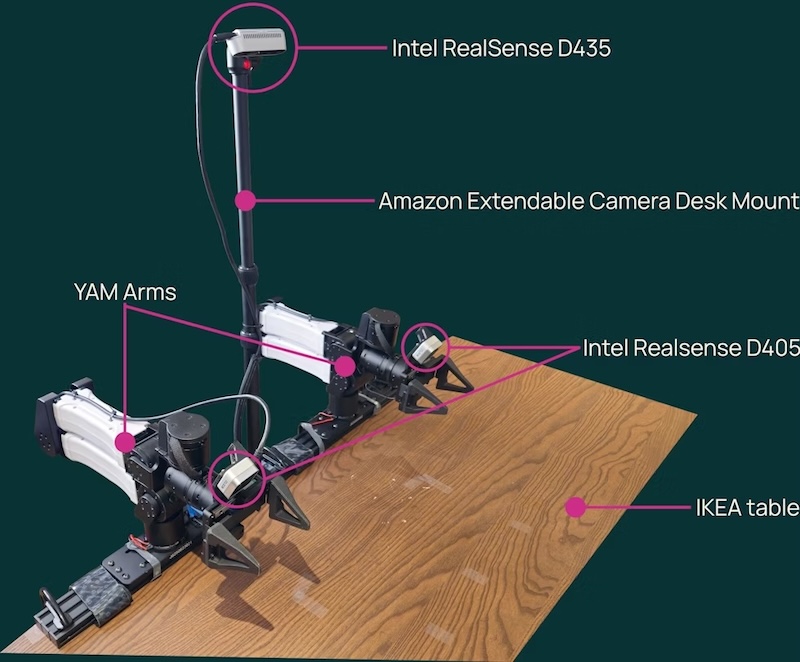

Alongside the model, Ai2 has also released the MolmoAct 2-Bimanual YAM dataset, which it describes as “the largest open-source bimanual tabletop manipulation robotics dataset ever published”, containing more than 720 hours of robot demonstrations.

The demonstrations include coordinated two-arm tasks such as:

- folding towels;

- scanning groceries;

- charging smartphones; and

- clearing tables.

Ai2 says the model performed strongly across both simulated and real-world robotics evaluations.

In tests using a Franka robot arm, MolmoAct 2 reportedly achieved high success rates across manipulation tasks including moving objects into bowls, placing pipettes into trays, and inserting objects into confined spaces.

Ai2 also said the system outperformed several competing robotics models in third-party evaluations conducted by Cortex AI.

One of the more notable aspects of the release is its early use in scientific research environments.

Ai2 said researchers at Stanford School of Medicine are piloting MolmoAct 2 in CRISPR gene-editing workflows within a “self-driving wetlab” project led by Professor Le Cong.

According to Ai2, the robot system is being used to automate repetitive laboratory manipulation tasks such as moving samples between stations and operating benchtop equipment.

Ai2 said the work highlights the potential for robotics foundation models to accelerate scientific research.

“After testing a range of generalist robotics models fine-tuned to their workflow, the Stanford team found that MolmoAct 2 shows strong potential to streamline key parts of wetlab operations and, in turn, accelerate scientific discovery,” Ai2 said.

Despite the progress, Ai2 acknowledged that the system still has limitations.

Ai2 said MolmoAct 2 currently plans batches of robot actions rather than continuously adapting motion in real time, which can reduce responsiveness during unexpected events.

It also remains limited to the robot platforms it was trained on, and requires additional training before it can be deployed on substantially different hardware configurations.
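The batching limitation can be illustrated with a small Python sketch. It is a simplified model, not Ai2's implementation: it assumes the policy replans only at the start of each batch and executes the batch open loop in between, and the batch sizes used are hypothetical values chosen for illustration.

```python
def steps_until_reaction(event_step: int, chunk_size: int) -> int:
    """Steps executed after an unexpected event before the next replan.

    Assumption: the policy observes and replans only at steps
    0, chunk_size, 2 * chunk_size, ... and the event happens during
    `event_step`, after that step's observation was taken.
    """
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    if event_step < 0:
        raise ValueError("event_step must be non-negative")
    next_replan = (event_step // chunk_size + 1) * chunk_size
    return next_replan - event_step


if __name__ == "__main__":
    # Hypothetical batch sizes; the announcement does not state the real one.
    for chunk_size in (1, 8, 16):
        delay = steps_until_reaction(event_step=3, chunk_size=chunk_size)
        print(f"batch of {chunk_size}: reacts {delay} step(s) after the event")
```

Under these assumptions, a larger batch means more already-planned actions are carried out before the model can take the disturbance into account.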

Still, the release reflects growing momentum behind open robotics foundation models as researchers attempt to build systems capable of operating more flexibly in real-world environments.

“The real test for any robotics model is whether it works outside controlled environments, where instructions vary and small mistakes can compound over time,” Ai2 said.

MolmoAct 2, along with its datasets, technical reports, and code, is now publicly available through Ai2’s research platform.