If you work in robotics, odds are you’re more interested in robots than data pipelines and cloud architecture.

Unfortunately, the latter are just as much a part of modern robotics as sensors and servo motors. If you hope to successfully develop and scale your robotics operations, you’ll first have to run the gauntlet that is building a cloud-based “backend” – the server side of the network.

Given the inherent complexity of this task, there are plenty of opportunities for oversight. So, before all else, it’s imperative that you and your team take the time to carefully consider and develop a plan of action.

To help you out with that process, Formant, a provider of a data platform for robot fleets, has created the following list of 10 crucial things to keep in mind when developing your cloud-based backend.

1. Selecting the right service provider isn’t easy

Despite there being only three major cloud service providers (CSPs) on the market today – Amazon Web Services (AWS), Google Cloud, and Microsoft Azure – selecting one isn’t necessarily easy.

Remember, you’re essentially selecting the foundation on which your operations will be built, and each of these three foundations possesses a variety of subtle (and some not-so-subtle) differences, which can directly impact your company’s overall success.

When selecting a CSP, it’s important that you first differentiate between your own internal development needs and those of your customers. In doing so, you will undoubtedly identify a number of points at which they aren’t aligned.

So it’s imperative that you carefully weigh and prioritize your own needs against those of your clientele.

What that balance ultimately looks like will depend upon your business and application. However, there are some fundamental ideas you should keep in mind while working out that personalized solution.

First, make sure to consider both your existing customer base and the customer base you hope to establish in the future.

For example, if AWS is the best fit for your team, but your largest existing client happens to be one of Microsoft’s 64,000 Certified Partner companies, then you will have to seriously consider adopting Azure instead.

Alternatively, imagine you deploy your product on AWS and, a year later, attract the interest of a customer whose IT department only works with Google Cloud.

If that customer represents a significant opportunity for your business, then you should be prepared to adapt.

In this case, however, the solution would mean adopting a multi-cloud model. You may also find customers who require a private deployment in a public cloud, or even an entirely private cloud. Whatever your future may hold, remain open to the idea of multi-cloud support.

2. Traditional observability tools aren’t robot-data friendly

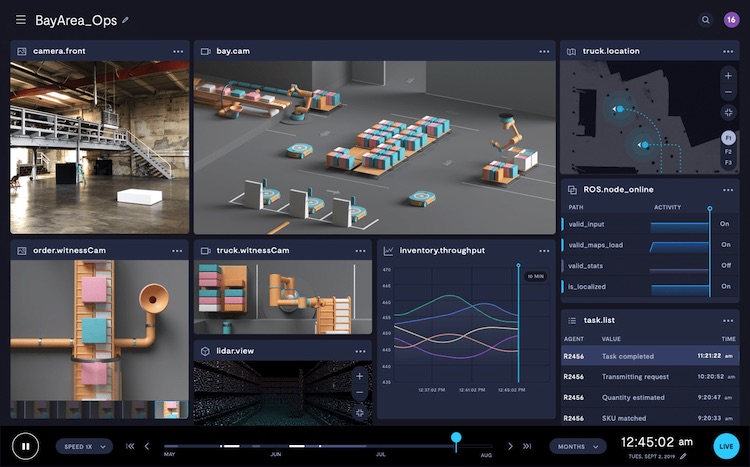

Robotic data is unique among the world’s many data sources. In addition to typically being more voluminous, it comes in a much wider variety of formats.

While most data sources generate text and metric data, a robot often must transmit location data, image data, three-dimensional data (for example, lidar and SLAM visualization), and streaming video data, to name just a few.

Tools like Grafana, Elasticsearch, Splunk, Datadog, and Prometheus are excellent when dealing with text or metrics. But, just like existing cloud technologies, most of these tools don’t support robot-shaped data.

In fact, none of the tools listed above support common robotic data formats like images, video, point clouds, and 3D.

It is important to visualize robot-specific data types because this is often the only way to see and understand what the robot is seeing.

For example, imagine a case where your robot is mislocalized, and its sensor data and real-world position are out of sync.

How can you confirm that they match without actually visualizing the point cloud and the other data used in sensor fusion?

The same applies to 3D transforms and camera views. So, what’s the solution? You guessed it. You’ll need to develop your own in-house software in order to make much of your data accessible.

3. Traditional cloud data pipeline tools aren’t that friendly either

While there are plenty of native cloud data pipeline tools out there, it’s unlikely that your robot-specific data application will make for an easy integration.

This is primarily because of the shape and variety of robot data and systems. When selecting your data pipeline tools, it is important to keep your medium and long-term business goals in mind — rather than simply your immediate needs.

That means, first and foremost, making sure that your selected tools can be used across the major cloud platforms. As we explained in consideration #1, you may find yourself needing to adopt multiple cloud platforms at some point.

When doing so, you definitely don’t want to render your existing tools obsolete. So, avoid provider-native tools and opt for generic solutions that are transferable between different clouds.

Finally, it is also important to be able to combine data coming from your system (like system metrics, logs, etc.) with data coming out of your robot. It is therefore important to expose interfaces that support both ROS-based and non-ROS data types.
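One way to keep pipeline code transferable between clouds is to write it against a small storage interface of your own and put each provider behind an adapter. The sketch below is illustrative only: `BlobStore`, `LocalDiskStore`, `archive_scan`, and the key layout are hypothetical names, and a local-disk backend stands in for a real S3, Google Cloud Storage, or Azure Blob adapter.

```python
from abc import ABC, abstractmethod
from pathlib import Path


class BlobStore(ABC):
    """Hypothetical storage interface that the pipeline codes against."""

    @abstractmethod
    def put(self, key: str, data: bytes) -> None: ...

    @abstractmethod
    def get(self, key: str) -> bytes: ...


class LocalDiskStore(BlobStore):
    """Stand-in backend. A cloud adapter would implement the same two methods."""

    def __init__(self, root: Path) -> None:
        self.root = root

    def put(self, key: str, data: bytes) -> None:
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


def archive_scan(store: BlobStore, robot_id: str, seq: int, payload: bytes) -> str:
    """Pipeline code sees only BlobStore, never a provider SDK."""
    key = f"{robot_id}/scans/{seq:08d}.bin"  # assumed key layout
    store.put(key, payload)
    return key
```

Supporting a second cloud then means adding one more adapter class rather than touching every call site.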

4. Connectivity can be spotty

Unlike servers, robots don’t typically stay put. And because of that mobility, they are particularly vulnerable to connectivity issues. The quality of a robot’s connection is also heavily influenced by its immediate physical location.

When developing your data pipeline, it’s essential that you prepare for unreliable connectivity. Data needs to be buffered, prioritized, or even stored on disk when connectivity issues arise.

Also worth considering here is the ability to handle out-of-order data and historical ingestion. Just as importantly, include these scenarios in your test cases. Take spotty connectivity into consideration from step one!
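To make the buffering and prioritization idea concrete, here is a minimal in-memory sketch. It is not a production design: the class and field names are hypothetical, spooling to disk is left out, and the eviction rule (drop the least important, newest message when full) is just one assumed policy.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class Message:
    priority: int      # lower number = more important
    timestamp: float   # capture time on the robot, in seconds
    seq: int           # tie-breaker so every message is uniquely ordered
    payload: bytes = field(compare=False)


class StoreAndForwardBuffer:
    """Holds messages while the link is down; sends the most important first."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self._heap = []
        self._seq = itertools.count()

    def push(self, priority: int, timestamp: float, payload: bytes) -> None:
        heapq.heappush(self._heap, Message(priority, timestamp, next(self._seq), payload))
        if len(self._heap) > self.capacity:
            # Assumed policy: evict the least important, newest message.
            self._heap.remove(max(self._heap))
            heapq.heapify(self._heap)

    def drain(self, link_up) -> list:
        """Pop messages in priority order for as long as link_up() returns True."""
        sent = []
        while self._heap and link_up():
            sent.append(heapq.heappop(self._heap))
        return sent


def in_capture_order(messages) -> list:
    """Receiver side: restore capture order, since arrival order is by priority."""
    return sorted(messages, key=lambda m: m.timestamp)
```

Because urgent messages jump the queue, the receiver cannot assume arrival order equals capture order, which is why ingestion has to key on the robot’s own timestamps.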

5. Bandwidth is expensive

Because robots rarely operate in fixed positions, inexpensive connectivity solutions such as Ethernet and Wi-Fi often aren’t viable, which leaves you with the much more expensive option of cellular/LTE.

When building your backend, you must carefully consider what data you will transmit, and at what resolution.

Ultimately, you must weigh cost against functionality or data richness. The best robotic cloud backends will allow you and your team to tweak that trade-off over time, as your business scales.

You will also want the ability to tweak that trade-off in real time. That means your backend should let you establish both manual and scheduled or automated events or triggers that modulate bandwidth usage based on predetermined variables.

For example, you might set up an automated event that initiates software or firmware downloads whenever the robot is back at its dock or base, where connectivity is good.

You could also schedule these events to occur during “off-peak” hours, as many cellular providers offer reduced data rates at these times.

Another example would be a trigger that initiates the ingestion of media data only when certain conditions are met, such as when an e-stop occurs, or when autonomy mode is triggered.

Such a trigger lets a business optimize its data costs by restricting video, a notorious data-cap devourer, to high-priority, high-stakes situations.
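The trigger examples above can be expressed as a small policy function. This is a sketch under stated assumptions: the `RobotState` fields, the transfer category names, and the 01:00 to 05:00 off-peak window are all hypothetical and would come from your own robot and carrier.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class RobotState:
    docked: bool
    estop_active: bool
    autonomy_engaged: bool
    local_time: time


# Assumed off-peak window; real values depend on your cellular plan.
OFF_PEAK_START = time(1, 0)
OFF_PEAK_END = time(5, 0)


def is_off_peak(now: time) -> bool:
    return OFF_PEAK_START <= now < OFF_PEAK_END


def allowed_transfers(state: RobotState) -> set:
    """Return the categories of traffic the robot may send or fetch right now."""
    allowed = {"telemetry"}  # lightweight data always flows
    if state.estop_active or state.autonomy_engaged:
        allowed.add("video")  # media only in high-stakes situations
    if state.docked or is_off_peak(state.local_time):
        allowed.add("firmware")  # large downloads on good or cheap links
    return allowed
```

Keeping the policy in one pure function makes it easy to test and to adjust as the cost trade-off changes.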

6. Build your backend with analytics already in mind

In the context of robotics, analytics essentially means turning raw data originating from robots into insights for making better decisions: identifying inefficiencies, monitoring trends, diagnosing unique technical failures, or showcasing fleet efficiency and progress.

It should come as no surprise, then, that as the robot fleet scales, the analytics data pipeline becomes more challenging.

These challenges come in many forms, including connectivity, data wrangling, data management, and developing a big data ETL pipeline by which to effectively aggregate the firehose of data, including out-of-order sub-second data, being emitted by every robot in your fleet.

At the same time that analytics becomes more challenging, however, it also becomes more valuable. And even at this relatively nascent point in robotics’ development, high-fidelity analytics has already proven itself to be an essential ingredient for business success in the field.

So, ensure that your backend is ready and able to capitalize on analytics, both immediately and moving forward.
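As a toy illustration of aggregating out-of-order, sub-second data, the sketch below groups samples into fixed time buckets keyed by the robot’s own timestamps, so arrival order does not affect the result. The function name, the tuple layout, and the one-second bucket size are assumptions; a real ETL pipeline would do this in a streaming or batch framework rather than in memory.

```python
from collections import defaultdict
from statistics import fmean


def bucket_means(samples, bucket_seconds=1.0):
    """Average values per (robot_id, time bucket).

    samples: iterable of (robot_id, timestamp_seconds, value), in any order.
    """
    buckets = defaultdict(list)
    for robot_id, ts, value in samples:
        # Assign each sample to the bucket containing its capture time.
        bucket_start = (ts // bucket_seconds) * bucket_seconds
        buckets[(robot_id, bucket_start)].append(value)
    return {key: fmean(values) for key, values in sorted(buckets.items())}
```

Feeding the same samples in a different order yields the same buckets, which is the property late-arriving data requires.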

7. Think about security from the outset

When designing the cloud data pipeline, it is important to consider security from the outset and to establish trust in the systems and data.

Security must be considered and designed in the following areas: data transport and storage, user/robot authentication and authorization, network protocols, and API design.

Starting at provisioning, you have to ensure that every request coming from the robot is signed. The security design should provide protection against DDoS attacks, let you easily blacklist bad IPs, and enforce other security-based rules.

It’s also imperative that every API call is authenticated and authorized using the client’s robot, user, or service IDs.

Furthermore, virtually all traffic should be encrypted, and all API interactions and data points should be associated with either an individual user or device.
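As one illustration of what a signed request can look like, the sketch below uses an HMAC-SHA256 over the robot ID, a timestamp, and the request body, with a shared secret assumed to be issued at provisioning. This is only one possible scheme, not a description of any particular platform; the message layout and the five-minute freshness window are assumptions, and many deployments use per-device certificates or asymmetric keys instead.

```python
import hashlib
import hmac
import time


def sign_request(secret: bytes, robot_id: str, body: bytes, timestamp: int) -> str:
    """Robot side: sign the identity, time, and payload together."""
    message = f"{robot_id}\n{timestamp}\n".encode() + body
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_request(secret: bytes, robot_id: str, body: bytes, timestamp: int,
                   signature: str, now=None, max_skew: int = 300) -> bool:
    """Server side: reject stale requests, then check the signature."""
    now = int(time.time()) if now is None else now
    if abs(now - timestamp) > max_skew:
        return False  # too old or too far in the future; limits replay
    expected = sign_request(secret, robot_id, body, timestamp)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, signature)
```

Because the robot ID is part of the signed message, a valid signature also ties the request to a specific device, which supports the per-device attribution described above.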

Put simply, security shouldn’t be an afterthought. Security should be part of virtually every conversation you have when developing your robotic application. To learn more about how Formant built end-to-end security and identity for robotics systems, read this blog.

8. Regulatory compliance is not optional

In recent years, a whole host of regulations and laws have been introduced to govern the flow, retention, and storage of data.

These are rules that a business must follow to ensure that the sensitive digital assets it holds are guarded against loss and misuse.

In the context of robotics and automation, society’s increasing anxiety surrounding privacy in the world of tech seems to take on even more gravity.

With this in mind – not to mention one’s inherent responsibility for the privacy of customer data – one shouldn’t cut any corners when it comes to privacy.

For example, a robot working in a warehouse could capture the identities of employees and visitors with its camera. Ideally, these images should go through a de-identification process as part of the data pipeline before they leave the robot.

It is important to consider these regulations before any data is uploaded to the cloud. Depending on the country your potential customers are located in, you might have to ensure that your solution satisfies local regulations.

Some common examples to be aware of include the European Union’s General Data Protection Regulation (GDPR) and, if you are in the health industry, the United States’ Health Insurance Portability and Accountability Act (HIPAA).

9. Integrations

There are at least three different personas who will be accessing the data from your cloud: the engineers who root-cause problems, the operators who ensure the robots continue to function in the field, and the executives who need this data to make an impression on prospects and investors.

These personas might want solutions that can easily integrate with their existing productivity tools.

That means you will require a backend architecture that is easily integrated into a variety of different tools, ranging from Zendesk, PagerDuty, Slack, and Grafana to existing BI tools like Tableau and Looker.

Many customers might even want integrations with their LDAP/AD tools for better role-based access control.

Some of your customers might even want to white-label the solution for their own customers, so it is important to ensure that your data pipeline can integrate with this wide variety of productivity and monitoring tools.

10. Experts not included

And now for the bad news. In order to implement such a robotics-ready backend, you’ll need to hire more than a few experts.

The amount of specialized talent required to get such a backend up and running is considerable, but maintaining, updating, and scaling such a system is even more daunting. As your robotics business scales, so will your cloud backend.

Scaling includes adopting multi-region and multi-cloud solutions and keeping up with rapidly changing technologies.

Unfortunately, none of these considerations have easy solutions, but at the end of the day, it’s a build-versus-buy decision. If you plan to build this on your own, fire up your HR engine and start seeking out a team that’s capable of handling such heavy lifting.

Robotics ≠ Robots

When you hear the word “robotics” it’s pretty safe to assume that the first thing that comes to your mind isn’t cloud architecture.

However, practically every modern-day robotics application would be rendered unworkable without some form of cloud connectivity. As our industry develops, it becomes increasingly clear that robots themselves are not solutions.

Instead, they are simply parts within a much larger architecture or mechanism. If a robot is a screwdriver, then your backend is the human that turns it.

But if you’re in the business of inventing screwdrivers, training humans probably sounds like nothing more than a distraction.

Truth be told, it is. But it’s an increasingly necessary one nonetheless. Platforms like Formant were designed to remove these elements from your daily operations and allow you to focus on your core business.

Having already navigated this gauntlet ourselves, we offer your business a way to outsource these headaches and get back to serving your customers.