AI-driven brute force is a problem that’s only getting worse. According to a recent study, cyberattacks using AI and ML have risen by 89% year-over-year as of early 2026, with around 11,000 attacks taking place per second.

What’s more, the technology behind these attacks keeps improving. As the volume rises, so does the effectiveness with which these attacks bypass security measures and exploit vulnerabilities.

Protection Through Rate Limiting

One of the ways in which these attacks are dealt with is known as rate limiting. For those unaware, this is the process of controlling how frequently a user or system can make requests to a platform within a set period of time.

By setting thresholds for request frequency, rate limiting aims to prevent attackers from overwhelming servers and to slow down brute-force attempts. Many companies have adopted it as the main line of defense in their security strategy.
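
To make the idea concrete, here is a minimal sketch of a fixed-window rate limiter in Python. The class name, the limit of three requests per minute, and the use of an IP address as the key are all assumptions chosen for illustration; production limiters often use sliding windows or token buckets instead.

```python
class FixedWindowRateLimiter:
    """Allow at most `limit` requests per key in each fixed time window."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window_seconds = window_seconds
        # key -> (window index, number of requests counted in that window)
        self._counters = {}

    def allow(self, key, now):
        """Return True if the request is within the limit, False otherwise."""
        window = int(now // self.window_seconds)
        seen_window, count = self._counters.get(key, (window, 0))
        if seen_window != window:
            count = 0  # a new window has started, so the count resets
        if count >= self.limit:
            return False
        self._counters[key] = (window, count + 1)
        return True


# Hypothetical usage: three requests per minute, keyed by client IP.
limiter = FixedWindowRateLimiter(limit=3, window_seconds=60)
results = [limiter.allow("203.0.113.7", now=t) for t in (0, 1, 2, 3)]
```

In this sketch the fourth request inside the same minute is refused, while a request from a different key, or one made after the window rolls over, is allowed again.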

But there’s a problem: in a world where AI-driven brute force is highly adaptive, there’s only so much that traditional rate limiting can do. For teams looking to stop brute-force attacks, bot detection and mitigation must be far more dynamic and intelligent, with real-time monitoring and behavioral analysis to get to the root of the threat.

The Problem With Rate Limiting

Let’s say an attacker is using a distributed network of thousands of AI-powered bots, each assigned a unique IP and session. What’s more, each bot operates just under the rate-limit threshold, sending requests in a slow, human-like pattern that appears legitimate in isolation.

Rate limiting works by monitoring individual IP addresses, accounts, and sessions, and temporarily blocking requests that exceed pre-set thresholds. No single bot in this scenario would trigger the limit, and yet collectively, the network is performing millions of actions simultaneously.

In other words, the problem with rate limiting is that it assumes that malicious activity originates from one or a few sources acting too quickly – those sources are exceeding the allowed thresholds, and therefore they must be abusive. AI-driven attacks, however, break this assumption.

By spreading activity across a vast number of sources and accurately mimicking human behavior in the process, they’re essentially evading traditional thresholds and blending in with normal traffic, meaning the attack can continue uninterrupted without any single IP or session being blocked.
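
The arithmetic behind this scenario is easy to check. The short simulation below is a sketch with invented numbers (a per-IP limit of 5 attempts per minute, 2,000 bots, 4 attempts per bot); none of these figures come from a real attack.

```python
from collections import Counter

PER_IP_LIMIT = 5       # assumed: max login attempts per IP per minute
BOT_COUNT = 2_000      # assumed size of the bot network
ATTEMPTS_PER_BOT = 4   # each bot stays just under the per-IP limit

# One entry per login attempt in a single minute; every bot has its own IP.
attempts = [
    f"bot-ip-{bot_id}"
    for bot_id in range(BOT_COUNT)
    for _ in range(ATTEMPTS_PER_BOT)
]

per_ip = Counter(attempts)
blocked_ips = [ip for ip, count in per_ip.items() if count > PER_IP_LIMIT]
total_attempts = len(attempts)

print(f"IPs blocked by the per-IP limit: {len(blocked_ips)}")  # 0
print(f"Password guesses that got through: {total_attempts}")  # 8000
```

Under these assumptions, the per-IP limiter blocks nothing, yet 8,000 guesses reach the login endpoint every minute.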

What Is Modern Rate Limiting?

It’s not such a bad thing that rate limiting – in its traditional form – is dead. If anything, it’s to be expected. The world of cybersecurity is constantly evolving, and essential practices that worked a few years ago don’t necessarily remain effective when the threat landscape is changing just as quickly.

The problem is that many companies still hold on to it as if it were a silver bullet. In 2026, nearly all major API providers and public-facing web services use rate limiting, and the approach is close to universal in enterprise-grade web APIs, cloud platform security, and SaaS products.

Against AI brute-force attacks, however, the cracks are inevitably going to show, and that’s why it’s so important for security teams to realize that there is a better option.

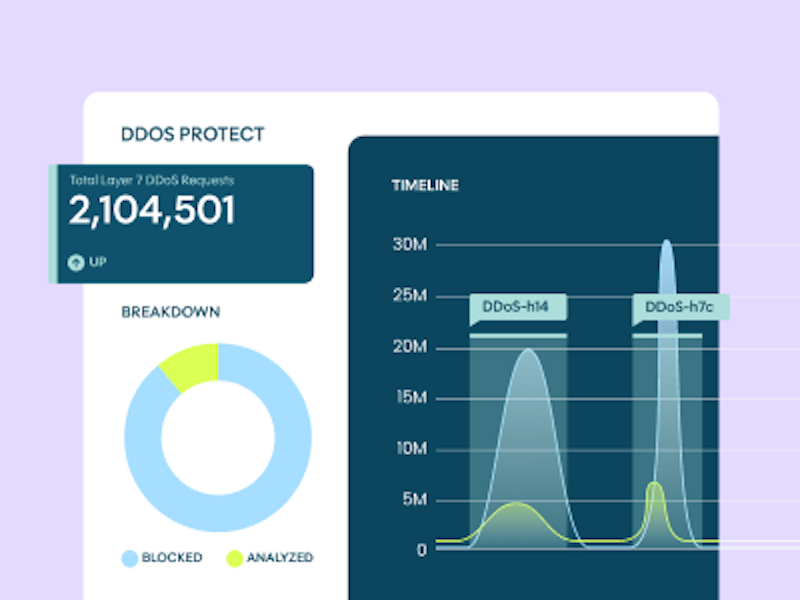

Modern rate limiting isn’t so different from traditional rate limiting, but the key distinction is that it lets you define custom response policies based on request volume within a time window – for instance, per hour or per day – and is integrated with AI-driven bot detection.

This means that when a distributed brute-force attack is underway, with thousands of bots spreading requests across multiple IPs, the system can identify which requests are likely automated and throttle them selectively, rather than blindly blocking traffic based on simple criteria. Importantly, this doesn’t just protect your systems. It also helps optimize the customer journey by ensuring real customers get fast, uninterrupted access to the platform, which is critical for maximizing conversions.
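
As a rough illustration of what such a policy could look like, the sketch below ties the hourly request allowance to a bot-likelihood score. The `Tier` type, the thresholds, and the action names are hypothetical, and the score itself is assumed to come from a separate bot-detection component.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    min_bot_score: float    # tier applies when the score is at least this high
    hourly_limit: int       # requests allowed per hour within this tier
    action_over_limit: str  # response once the limit is exceeded


# Hypothetical tiers, checked from most to least suspicious.
TIERS = [
    Tier(min_bot_score=0.9, hourly_limit=0, action_over_limit="block"),
    Tier(min_bot_score=0.6, hourly_limit=10, action_over_limit="throttle"),
    Tier(min_bot_score=0.0, hourly_limit=1000, action_over_limit="throttle"),
]


def decide(bot_score: float, requests_this_hour: int) -> str:
    """Pick a response for a request.

    `requests_this_hour` counts the current request as well.
    """
    for tier in TIERS:
        if bot_score >= tier.min_bot_score:
            if requests_this_hour > tier.hourly_limit:
                return tier.action_over_limit
            return "allow"
    return "allow"


# A likely bot is stopped on its first request; a busy human is not.
likely_bot = decide(bot_score=0.95, requests_this_hour=1)
busy_human = decide(bot_score=0.1, requests_this_hour=200)
```

The design choice here is that volume alone never decides the outcome: a slow but clearly automated client is limited, while a fast but clearly human one keeps its access.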

How Modern AI Bot Management Works

Modern AI bot management does this through a few key mechanisms:

Behavioral Analysis

Where user interactions are monitored to detect automated behavior. A key cybersecurity term to know here is “behavioral biometrics”, whereby patterns like typing rhythm, mouse movements, and navigation speed distinguish humans from bots.

Device Fingerprinting

Where hundreds of device-level attributes are collected to create a unique identifier.

Signature Detection

Where known bot frameworks and automation tools are identified by patterns in HTTP headers and TLS fingerprints.

Reputational Detection

Where IP addresses and data center origins are evaluated against historical threat data.

Intent Analysis

Where the system distinguishes between benign automation and malicious activities.

Together, these mechanisms not only work to identify and block AI brute-force attacks, but also adapt continuously to new threats, effectively creating a constantly moving, evolving defense system that doesn’t grow obsolete.

As mentioned earlier, this matters even more in a landscape where attackers are highly adaptive. It also puts control and insight back into the hands of the security team.
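
One simple way to picture how the five mechanisms might feed a single decision is a weighted score. The sketch below is an assumption for illustration only: the signal names, the weights, and the idea that each mechanism reports a suspicion value between 0 and 1 are all invented here, and real products typically rely on trained models rather than fixed weights.

```python
# Hypothetical weights per mechanism; a real system would learn or tune these.
WEIGHTS = {
    "behavior": 0.30,     # behavioral analysis
    "fingerprint": 0.20,  # device fingerprinting
    "signature": 0.25,    # signature detection
    "reputation": 0.15,   # reputational detection
    "intent": 0.10,       # intent analysis
}


def bot_score(signals):
    """Combine per-mechanism suspicion values (0 to 1) into one score."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = signals.get(name, 0.0)       # a missing signal counts as 0
        value = min(1.0, max(0.0, value))    # clamp to the expected range
        total += weight * value
    return total


# Example: strong behavioral and signature signals, weak everything else.
score = bot_score({"behavior": 0.9, "signature": 0.8, "reputation": 0.2})
```

A score like this could then drive the kind of selective throttling described earlier, with higher scores receiving stricter limits.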

You can decide what traffic gets prioritized, and who gets limited or blocked. You can see what attacks are being attempted and what legitimate activity is occurring. In other words, it turns an ordinary defensive measure into an intelligent, proactive system that both protects and empowers those who use it.