Image annotation outsourcing services in the Philippines have evolved into high-precision “Spatial Engineering” hubs.

By synchronizing 3D LiDAR point clouds with 2D RGB video feeds, specialized Philippine teams provide the centimeter-level ground truth and temporal consistency required for autonomous robots to navigate complex, unstructured human environments with 99.9% reliability.

Executive Briefing: Today’s Robotics Vision Shift

- Dimensionality Leap: Industry demand has shifted from 2D bounding boxes to 3D Cuboids and Semantic Segmentation.

- Temporal Logic: 2026 workflows prioritize “Object Permanence” – maintaining consistent tracking IDs across occlusions.

- Sensor Fusion Alignment: The critical bottleneck is now the sub-millisecond synchronization of LiDAR, Radar, and Vision data.

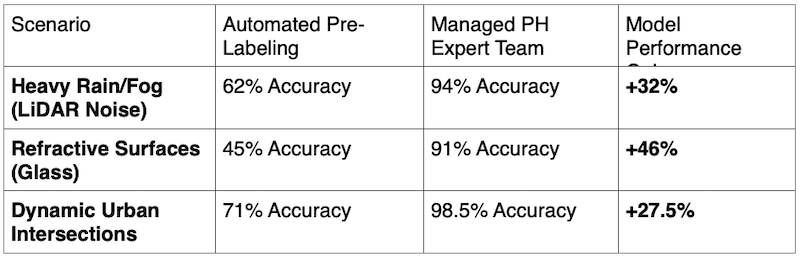

- The “Edge Case” Moat: Success is defined by how a model handles “Long-Tail” events like adverse weather or refractive surfaces.

- Infrastructure Density: The Philippines has established dedicated “Vision Labs” with high-compute GPU clusters for 3D rendering.

The promise of widespread robotics – from last-mile delivery drones to humanoid warehouse assistants – rests on a single, invisible pillar: the quality of the spatial data used to train their perception engines.

As point-cloud architectures such as VoxelNet and PointNet++ see wide adoption in 3D perception, the demand for image annotation outsourcing to the Philippines has changed radically. We have moved past simple labeling and into the era of Spatial Ground Truth.

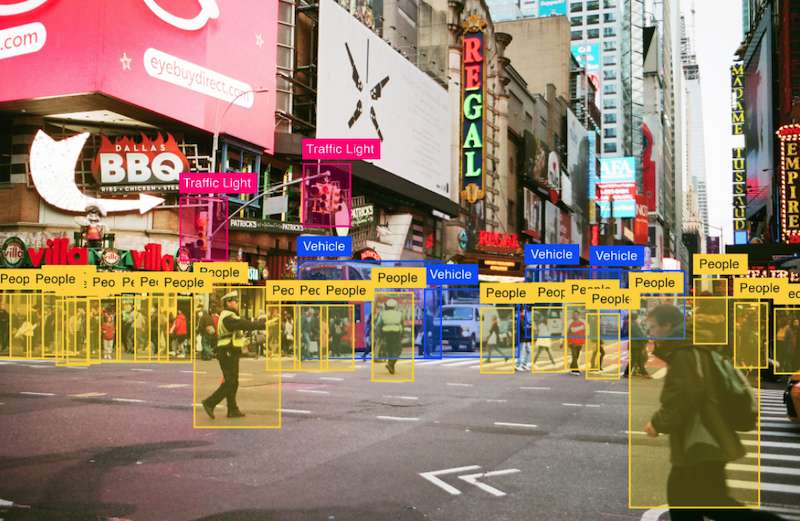

Beyond the Pixel: The 3D Point Cloud Challenge

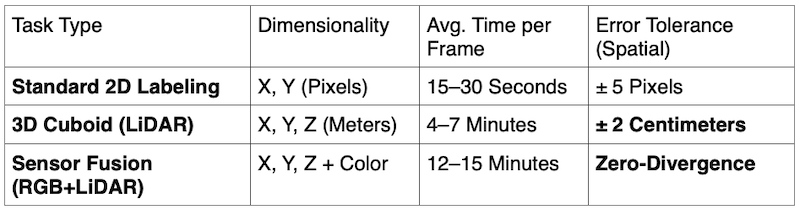

Standard image labeling deals with X and Y coordinates. Robotics adds the Z-axis. Image labeling outsourcing to the Philippines now centers on LiDAR (Light Detection and Ranging) point clouds, where annotators must work through a sparse, three-dimensional scene to define precise object boundaries across a full 360-degree field of view.

“After 40 years of navigating the global outsourcing landscape – including over 20 years of executive leadership at the world’s largest BPO provider – I can attest that the technical leap we are seeing in the Philippines is unprecedented,” says John Maczynski, CEO of PITON-Global.

“We are no longer hiring ‘data entry’ staff. We are deploying ‘Spatial Technicians’ who understand sensor parallax, LiDAR ghosting, and coordinate transformation. In the Philippines, we’ve built the infrastructure to treat data annotation as a high-stakes engineering discipline.”

Table 1: 2026 Annotation Complexity Metrics (Robotics vs. General AI)

Solving for ‘Temporal Consistency’ in Video

A major breakthrough in the Philippine model is the mastery of Temporal Tracking. In autonomous robotics, an object is not just a static box; it has a position and a direction of travel, and both must stay attached to the same identity from frame to frame.

If a delivery robot loses track of a pedestrian because they walked behind a mailbox (occlusion), the model fails.

Philippine teams use multi-frame interpolation and “Look-ahead” logic to ensure that an object’s Unique ID remains consistent even when 90% of its surface area is temporarily obscured. The resulting training data teaches the model Object Permanence, a critical safety requirement for real-world deployments.
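As a rough illustration of multi-frame interpolation, the sketch below fills in the frames between two human-labeled keyframes while carrying the same track ID through the occluded gap. It assumes simple linear motion between keyframes; the `Box` fields and the `interpolate_track` function are hypothetical names chosen for this example, not part of any specific annotation tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Box:
    """A 2D box label on one video frame (hypothetical schema)."""
    track_id: int
    frame: int
    cx: float  # box center x, pixels
    cy: float  # box center y, pixels
    w: float   # box width, pixels
    h: float   # box height, pixels
    occluded: bool = False


def _lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + t * (b - a)


def interpolate_track(start: Box, end: Box) -> List[Box]:
    """Fill the frames strictly between two keyframes of one track.

    Every generated box keeps the keyframes' track ID and is flagged
    as occluded so a reviewer can verify it later.
    """
    if start.track_id != end.track_id:
        raise ValueError("keyframes must belong to the same track")
    span = end.frame - start.frame
    if span <= 0:
        raise ValueError("end keyframe must come after start keyframe")

    filled = []
    for frame in range(start.frame + 1, end.frame):
        t = (frame - start.frame) / span
        filled.append(Box(
            track_id=start.track_id,
            frame=frame,
            cx=_lerp(start.cx, end.cx, t),
            cy=_lerp(start.cy, end.cy, t),
            w=_lerp(start.w, end.w, t),
            h=_lerp(start.h, end.h, t),
            occluded=True,
        ))
    return filled
```

In this sketch the annotator labels the pedestrian just before and just after the mailbox, and the gap is filled automatically. Linear motion is only a starting point; production tools may fit curves or use model-assisted tracking instead.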

Table 2: Impact of Managed PH Teams on “Edge Case” Validation

The Philippines: The “Vision Lab” of the Pacific

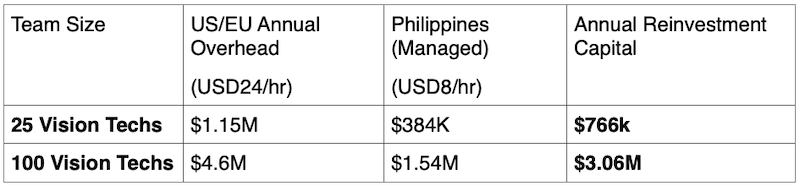

Why has the Philippines become the global hub for image labeling outsourcing? Today, it comes down to Infrastructure and Logic.

- Workstation Sophistication: High-fidelity 3D annotation requires massive GPU power for real-time point cloud rendering. Philippine BPOs have invested in “Vision Labs” that rival the hardware of the AI labs they serve.

- Semantic Nuance: In robotics, “Stuff” (road, sky, vegetation) is just as important as “Things” (cars, people). Philippine annotators excel at Panoptic Segmentation, which combines semantic labeling (a class for every pixel) and instance labeling (a separate ID for each countable object) into a single, unified data asset.

- Human-in-the-Loop (HITL) Security: For robotics companies handling proprietary hardware designs, the managed model in the Philippines provides a closed-loop security environment (ISO 27001/SOC 2), which is very difficult to achieve with fragmented crowdsourced platforms.
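To make the Panoptic Segmentation point above concrete, here is a minimal sketch of how a semantic map and an instance map can be merged into one panoptic map. It assumes the common `class_id * divisor + instance_id` encoding; the class IDs, the `LABEL_DIVISOR` value, and the function names are illustrative assumptions rather than a fixed standard.

```python
from typing import List, Tuple

# Hypothetical class tables for illustration only.
STUFF = {0: "road", 1: "sky", 2: "vegetation"}   # uncountable regions
THINGS = {10: "car", 11: "person"}               # countable objects
LABEL_DIVISOR = 1000  # assumed upper bound on instances per class


def to_panoptic(semantic: List[List[int]],
                instance: List[List[int]]) -> List[List[int]]:
    """Merge per-pixel class IDs and instance IDs into one panoptic map.

    "Stuff" pixels always get instance 0; "Thing" pixels keep the
    instance ID assigned by the annotator.
    """
    panoptic = []
    for sem_row, inst_row in zip(semantic, instance):
        row = []
        for sem, inst in zip(sem_row, inst_row):
            inst_id = inst if sem in THINGS else 0
            row.append(sem * LABEL_DIVISOR + inst_id)
        panoptic.append(row)
    return panoptic


def decode(panoptic_id: int) -> Tuple[int, int]:
    """Split a panoptic ID back into (class_id, instance_id)."""
    return divmod(panoptic_id, LABEL_DIVISOR)
```

With this encoding, two cars in the same image share class 10 but receive distinct values such as 10001 and 10002, while every road pixel is simply 0.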

Table 3: Scaling ROI – In-House vs. PH Outsourced Vision Labs

The Ground Truth Advantage

The winner of the robotics race won’t be the company with the best code, but the company with the best Ground Truth. By leveraging image annotation outsourcing services in the Philippines, robotics firms gain access to a workforce that acts as a “Cognitive Extension” of their engineering teams.

Under the veteran guidance of John Maczynski, the Philippines has secured its place as the world’s most reliable factory for the high-precision data that makes 2026’s autonomous world possible.

Frequently Asked Questions (FAQ)

How does image labeling for robotics differ from standard AI labeling?

Robotics requires Spatial Awareness. While standard AI might just identify a “car,” robotics annotation requires 3D Cuboids that define the car’s exact height, width, and depth in meters, as well as its orientation (heading) to predict movement.
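A 3D cuboid label of this kind can be sketched as a small data structure. The example below assumes a center point, dimensions in meters, and a heading (yaw) about the vertical axis; the `Cuboid` class and its field names are hypothetical and do not follow any particular dataset's schema.

```python
import math
from dataclasses import dataclass


@dataclass
class Cuboid:
    """An oriented 3D box label (hypothetical schema, units in meters)."""
    cx: float      # center x
    cy: float      # center y
    cz: float      # center z
    length: float  # extent along the heading direction
    width: float   # extent across the heading direction
    height: float  # vertical extent
    yaw: float     # heading in radians, rotation about the z-axis

    def contains(self, x: float, y: float, z: float) -> bool:
        """Return True if a LiDAR point lies inside the cuboid."""
        dx, dy = x - self.cx, y - self.cy
        cos_y, sin_y = math.cos(self.yaw), math.sin(self.yaw)
        # Rotate the offset by -yaw to express it in the box's own frame.
        local_x = cos_y * dx + sin_y * dy
        local_y = -sin_y * dx + cos_y * dy
        local_z = z - self.cz
        return (abs(local_x) <= self.length / 2
                and abs(local_y) <= self.width / 2
                and abs(local_z) <= self.height / 2)


def count_points_inside(cuboid: Cuboid, points) -> int:
    """Count how many (x, y, z) points fall inside the cuboid."""
    return sum(1 for x, y, z in points if cuboid.contains(x, y, z))
```

A check like `count_points_inside` is one plausible quality gate: a cuboid that encloses very few LiDAR points may be misplaced or rotated incorrectly.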

What is ‘Sensor Fusion’ annotation?

It is the process of overlaying 2D camera images with 3D LiDAR point clouds. Annotators must ensure that the object in the photo perfectly aligns with the “dots” in the 3D scan, giving the robot both the color/texture of an object and its exact distance.
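The overlay step can be illustrated with a basic pinhole camera projection. The sketch below assumes a known LiDAR-to-camera rotation and translation and known camera intrinsics (`fx`, `fy`, `cx`, `cy`), with the camera's z-axis pointing forward; lens distortion is ignored, and all function names are hypothetical.

```python
from typing import Iterable, Optional, Sequence, Tuple

Point3D = Tuple[float, float, float]
Pixel = Tuple[float, float]
Box2D = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def lidar_to_pixel(point: Point3D,
                   rotation: Sequence[Sequence[float]],
                   translation: Sequence[float],
                   fx: float, fy: float,
                   cx: float, cy: float) -> Optional[Pixel]:
    """Project one LiDAR point into image coordinates.

    Returns None when the point is behind the camera.
    """
    # Extrinsics: move the point from the LiDAR frame to the camera frame.
    xc, yc, zc = (
        sum(r * p for r, p in zip(row, point)) + t
        for row, t in zip(rotation, translation)
    )
    if zc <= 0:
        return None
    # Intrinsics: perspective divide, then scale and shift to pixels.
    return (fx * xc / zc + cx, fy * yc / zc + cy)


def fraction_in_box(points: Iterable[Point3D], box: Box2D,
                    rotation, translation,
                    fx: float, fy: float, cx: float, cy: float) -> float:
    """Fraction of visible projected points that land inside a 2D box."""
    visible = inside = 0
    for point in points:
        pixel = lidar_to_pixel(point, rotation, translation, fx, fy, cx, cy)
        if pixel is None:
            continue
        visible += 1
        u, v = pixel
        if box[0] <= u <= box[2] and box[1] <= v <= box[3]:
            inside += 1
    return inside / visible if visible else 0.0
```

If the points belonging to a labeled 3D object mostly project outside its 2D box, that suggests the calibration or one of the two labels needs review.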

Why is temporal consistency important in video annotation?

Robots operate in a continuous flow of time. If a label “flickers” or changes ID between frames, the robot sees one object vanish and a new one appear in its place, which can destabilize its path-planning algorithm. Philippine teams specialize in “Frame-by-Frame” ID persistence to ensure smooth, safe robotic movement.
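One way to catch ID flicker automatically is to look for a box that disappears in one frame while a heavily overlapping box with a new ID appears in the next. The sketch below assumes each frame is a dictionary mapping track ID to an `(x_min, y_min, x_max, y_max)` box; the 0.5 overlap threshold and the function names are illustrative assumptions.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def find_id_switches(frames: List[Dict[int, Box]],
                     iou_threshold: float = 0.5) -> List[Tuple[int, int, int]]:
    """Flag likely ID switches between consecutive frames.

    Returns (frame_index, old_id, new_id) wherever an ID vanishes and
    a new ID appears at nearly the same location in the next frame.
    """
    switches = []
    for index in range(1, len(frames)):
        prev, curr = frames[index - 1], frames[index]
        for old_id, old_box in prev.items():
            if old_id in curr:
                continue  # track continues normally
            for new_id, new_box in curr.items():
                if new_id in prev:
                    continue  # not a newly appearing ID
                if iou(old_box, new_box) >= iou_threshold:
                    switches.append((index, old_id, new_id))
    return switches
```

Flagged frames still need human review, since a genuine handoff (one object leaving as another enters the same spot) would look identical to this check.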