Researchers create a new simulation tool for robots to manipulate complex fluids, a step towards helping robots more effortlessly assist with daily tasks involving liquids

Imagine you’re enjoying a picnic by a riverbank on a windy day. (Choosing to do this on a windy day was your own poor decision.)

A gust of wind catches your paper napkin, which lands on the water’s surface and quickly drifts away from you. You grab a nearby stick and carefully agitate the water to retrieve it, creating a series of small waves.

These waves eventually push the napkin back towards the shore, so you grab it. In this scenario, the water acts as a medium for transmitting forces, enabling you to manipulate the position of the napkin without direct contact.

Humans regularly engage with various types of fluids in their daily lives, but doing the same remains a formidable and elusive goal (for obvious reasons) for current robotic systems. Hand me my latte? Sure. Make it? That’s going to require a bit more nuance.

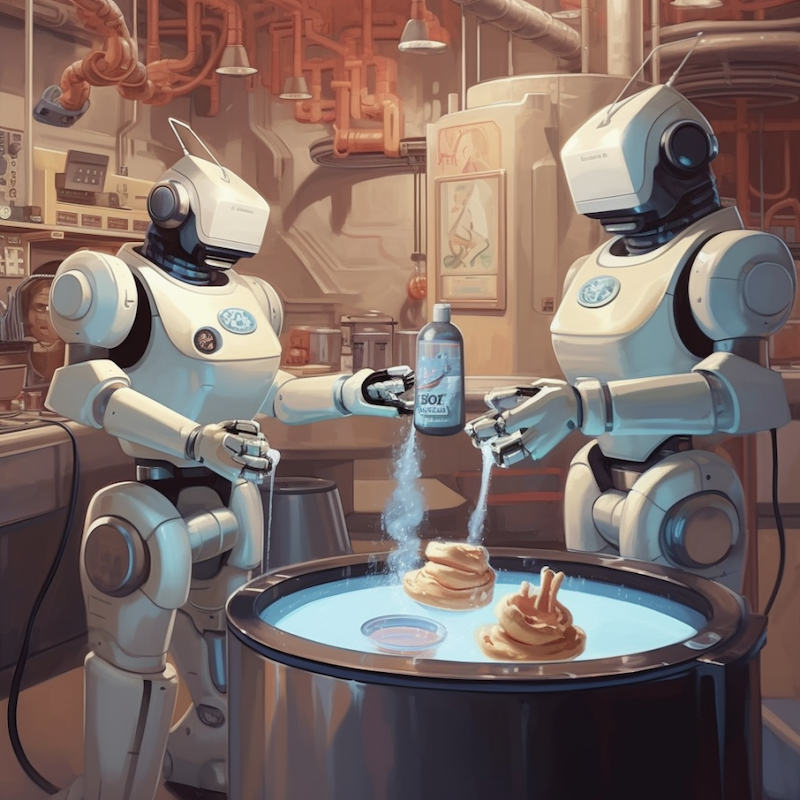

FluidLab, a new simulation tool from MIT CSAIL, enhances robot learning for complex fluid manipulation tasks like making latte art, ice cream, and even manipulating air.

The virtual environment offers a versatile collection of intricate fluid handling challenges, involving both solids and liquids as well as multiple fluids simultaneously. FluidLab supports modeling solids, liquids, and gases, including elastic, plastic, and rigid objects; Newtonian and non-Newtonian liquids; and smoke and air.
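To make the Newtonian versus non-Newtonian distinction concrete: a Newtonian liquid such as water has a viscosity that does not depend on how fast it is sheared, while a non-Newtonian liquid's viscosity does. The sketch below uses the textbook power-law (Ostwald-de Waele) model, where shear stress is K * rate^n and so the apparent viscosity is K * rate^(n - 1), purely as an illustration. The article does not say which material models FluidEngine uses, and the parameter values are illustrative assumptions.

```python
def effective_viscosity(shear_rate: float, k: float, n: float) -> float:
    """Apparent viscosity (Pa*s) of a power-law fluid: mu = k * shear_rate**(n - 1).

    n == 1 gives a Newtonian fluid (constant viscosity k);
    n < 1 is shear-thinning, n > 1 is shear-thickening.
    """
    if shear_rate <= 0:
        raise ValueError("shear_rate must be positive")
    return k * shear_rate ** (n - 1.0)


if __name__ == "__main__":
    # Illustrative parameters only: roughly water-like vs. a generic shear-thinning liquid.
    for rate in (1.0, 10.0, 100.0):
        newtonian = effective_viscosity(rate, k=1e-3, n=1.0)
        thinning = effective_viscosity(rate, k=10.0, n=0.5)
        print(f"shear rate {rate:6.1f} 1/s: Newtonian {newtonian:.4f}, "
              f"shear-thinning {thinning:.4f} Pa*s")
```

The Newtonian column stays constant as the shear rate grows, while the shear-thinning liquid gets runnier the faster it is stirred, which is one reason a simulator must treat the two classes differently.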

At the heart of FluidLab lies FluidEngine, an easy-to-use physics simulator capable of seamlessly calculating and simulating various materials and their interactions, all while harnessing the power of graphics processing units (GPUs) for faster processing.

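The engine is “differentiable”, meaning it can compute how the simulated outcome changes in response to small changes in its inputs, such as the robot’s actions. This lets the simulator incorporate physics knowledge for a more realistic physical world model, leading to more efficient learning and planning for robotic tasks.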
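A minimal sketch of what “differentiable” buys you, assuming a hypothetical one-dimensional toy: a floating object gets a single push and is then slowed by quadratic drag. Dual numbers carry a derivative alongside every value, so one run of the simulator returns both the final position and how that position changes with the push, and a finite-difference check confirms it. This is forward-mode automatic differentiation written in plain Python; the names `Dual` and `simulate` are illustrative, and this is not FluidEngine's implementation.

```python
from dataclasses import dataclass


@dataclass
class Dual:
    """A value plus its derivative: a + b*eps, with eps**2 == 0."""
    val: float
    der: float = 0.0

    def __add__(self, other):
        other = lift(other)
        return Dual(self.val + other.val, self.der + other.der)

    __radd__ = __add__

    def __sub__(self, other):
        other = lift(other)
        return Dual(self.val - other.val, self.der - other.der)

    def __mul__(self, other):
        other = lift(other)
        # Product rule: (a + b eps)(c + d eps) = ac + (ad + bc) eps
        return Dual(self.val * other.val, self.der * other.val + self.val * other.der)

    __rmul__ = __mul__


def lift(x):
    """Treat plain numbers as constants (zero derivative)."""
    return x if isinstance(x, Dual) else Dual(float(x))


def simulate(push, steps=50, dt=0.02, drag=0.8):
    """Hypothetical toy: initial speed `push`, then quadratic drag.

    Works on floats or Dual numbers; assumes the speed stays positive.
    """
    x, v = 0.0, push
    for _ in range(steps):
        v = v - drag * v * v * dt  # quadratic drag slows the object
        x = x + v * dt             # semi-implicit Euler position update
    return x


if __name__ == "__main__":
    result = simulate(Dual(2.0, 1.0))  # seed d(push)/d(push) = 1
    h = 1e-6
    fd = (simulate(2.0 + h) - simulate(2.0 - h)) / (2 * h)
    print(f"final position {result.val:.4f}")
    print(f"d(position)/d(push): dual {result.der:.6f} vs finite difference {fd:.6f}")
```

Forward mode costs one pass per input being differentiated, which is cheap for a single push; with many actions per trajectory, reverse mode is usually the better fit.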

In contrast, most existing reinforcement learning methods lack such a world model and depend on trial and error alone. This enhanced capability, say the researchers, lets users experiment with robot learning algorithms and toy with the boundaries of current robotic manipulation abilities.

To set the stage, the researchers tested robot learning algorithms in FluidLab, discovering and overcoming challenges unique to fluid systems. By developing clever optimization methods, they were able to transfer what was learned in simulation effectively to real-world scenarios.

Chuang Gan, a visiting researcher at MIT CSAIL, a research scientist at the MIT-IBM Watson AI Lab, and the senior author on a new paper about the research, says: “Imagine a future where a household robot effortlessly assists you with daily tasks, like making coffee, preparing breakfast, or cooking dinner.

“These tasks involve numerous fluid manipulation challenges. Our benchmark is a first step towards enabling robots to master these skills, benefiting households and workplaces alike.

“For instance, these robots could reduce wait times and enhance customer experiences in busy coffee shops. FluidEngine is, to our knowledge, the first-of-its-kind physics engine that supports a wide range of materials and couplings while being fully differentiable.

“With our standardized fluid manipulation tasks, researchers can evaluate robot learning algorithms and push the boundaries of today’s robotic manipulation capabilities.”

Fluid fantasia

Over the past few decades, scientists in the robotic manipulation domain have mainly focused on manipulating rigid objects or on very simple fluid manipulation tasks like pouring water. Studying fluid manipulation tasks in the real world can also be unsafe and costly.

With fluid manipulation, it’s not always just about fluids, though. In many tasks, such as creating the perfect ice cream swirl, mixing solids into liquids, or paddling through the water to move objects, it’s a dance of interactions between fluids and various other materials.

Simulation environments must support “coupling”, or how two materials with different properties interact. Fluid manipulation tasks usually require pretty fine-grained precision, with delicate interactions and handling of materials, setting them apart from straightforward tasks like pushing a block or opening a bottle.
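Coupling can be illustrated with a hypothetical toy from the picnic scene: a parcel of water and a floating napkin exchange momentum through a drag force, and each feels the other's push in equal and opposite measure (Newton's third law). The masses, drag coefficient, and the functions `couple_step` and `simulate_coupling` are assumptions for illustration, not FluidLab's model.

```python
def couple_step(v_water, v_napkin, m_water, m_napkin, k, dt):
    """One explicit step of two-way drag coupling between two materials.

    The napkin feels force k * (v_water - v_napkin); the water feels the
    equal and opposite force, so total momentum is unchanged.
    """
    force = k * (v_water - v_napkin)
    v_napkin_new = v_napkin + dt * force / m_napkin
    v_water_new = v_water - dt * force / m_water
    return v_water_new, v_napkin_new


def simulate_coupling(steps=200, dt=0.01):
    # Illustrative values: a heavy water parcel, a light napkin, a drag coefficient.
    m_water, m_napkin, k = 5.0, 0.01, 0.05
    v_water, v_napkin = -0.3, 0.5  # waves push toward shore (-); napkin drifts away (+)
    p_before = m_water * v_water + m_napkin * v_napkin
    for _ in range(steps):
        v_water, v_napkin = couple_step(v_water, v_napkin, m_water, m_napkin, k, dt)
    p_after = m_water * v_water + m_napkin * v_napkin
    return v_water, v_napkin, p_before, p_after


if __name__ == "__main__":
    vw, vn, p0, p1 = simulate_coupling()
    print(f"water {vw:.4f} m/s, napkin {vn:.4f} m/s, momentum {p0:.6f} -> {p1:.6f}")
```

The napkin ends up moving toward the shore with the water, and total momentum is preserved. Dropping the water-side update (one-way coupling) would let the napkin speed up without slowing the water, so momentum would no longer balance.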

FluidLab’s simulator can quickly calculate how different materials interact with each other.

Helping out the GPUs is “Taichi”, a domain-specific language embedded in Python. The system can compute gradients (rates of change in environment configurations with respect to the robot’s actions) for different material types and their interactions (couplings) with one another.
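The idea of gradients flowing through couplings can be sketched with a tiny reverse-mode automatic differentiation “tape” in plain Python. In this hypothetical chain, a paddle drives a water parcel, which in turn drags a napkin; one backward pass yields the derivative of a loss on the napkin's final position with respect to every paddle action. Taichi provides its own automatic differentiation; the `Var` class and `paddle_water_napkin` function here are illustrative stand-ins, not its API, and the coefficients are assumptions.

```python
class Var:
    """Scalar node in a minimal reverse-mode autodiff graph."""

    def __init__(self, value, parents=()):
        self.value = float(value)
        self.parents = parents  # pairs of (input Var, local derivative)
        self.grad = 0.0

    @staticmethod
    def _wrap(x):
        return x if isinstance(x, Var) else Var(x)

    def __add__(self, other):
        other = Var._wrap(other)
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    __radd__ = __add__

    def __sub__(self, other):
        other = Var._wrap(other)
        return Var(self.value - other.value, ((self, 1.0), (other, -1.0)))

    def __mul__(self, other):
        other = Var._wrap(other)
        return Var(self.value * other.value, ((self, other.value), (other, self.value)))

    __rmul__ = __mul__

    def backward(self):
        """Set node.grad = d(self)/d(node) for every node this value depends on."""
        order, seen, stack = [], set(), [(self, False)]
        while stack:  # iterative DFS: append each node after all of its inputs
            node, expanded = stack.pop()
            if expanded:
                order.append(node)
            elif node not in seen:
                seen.add(node)
                stack.append((node, True))
                stack.extend((p, False) for p, _ in node.parents)
        self.grad = 1.0
        for node in reversed(order):  # outputs first, inputs last
            for parent, local in node.parents:
                parent.grad += local * node.grad


def paddle_water_napkin(paddle, dt=0.05, k_pw=2.0, k_wn=3.0):
    """Hypothetical toy chain: paddle speed -> water speed -> napkin speed -> position."""
    water, napkin_v, napkin_x = Var(0.0), Var(0.0), Var(0.0)
    for u in paddle:
        water = water + dt * k_pw * (u - water)              # paddle-water coupling
        napkin_v = napkin_v + dt * k_wn * (water - napkin_v)  # water-napkin coupling
        napkin_x = napkin_x + dt * napkin_v
    return napkin_x


if __name__ == "__main__":
    paddle = [Var(1.0) for _ in range(30)]  # hypothetical paddle speed at each step
    error = paddle_water_napkin(paddle) - 0.5  # 0.5 = hypothetical target position
    loss = error * error
    loss.backward()
    print(f"loss {loss.value:.5f}")
    print("d(loss)/d(paddle[t]) for t = 0, 15, 29:",
          [round(paddle[t].grad, 6) for t in (0, 15, 29)])
```

The backward pass visits each recorded operation once, so its cost is proportional to the forward simulation, which is why reverse mode suits many actions feeding a single scalar loss.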

This precise information can be used to fine-tune the robot’s movements for better performance. As a result, the simulator can find solutions faster and more efficiently, setting it apart from its counterparts.

The ten tasks the team put forth fell into two categories: using fluids to manipulate hard-to-reach objects, and directly manipulating fluids for specific goals.

Examples included separating liquids, guiding floating objects, transporting items with water jets, mixing liquids, creating latte art, shaping ice cream, and controlling air circulation.

Carnegie Mellon University (CMU) PhD student Zhou Xian, another author on the paper, says: “The simulator works similarly to how humans use their mental models to predict the consequences of their actions and make informed decisions when manipulating fluids. This is a significant advantage of our simulator compared to others.

“While other simulators primarily support reinforcement learning, ours supports reinforcement learning and allows for more efficient optimization techniques. Utilizing the gradients provided by the simulator supports highly efficient policy search, making it a more versatile and effective tool.”
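A minimal sketch of why simulator gradients help policy search, assuming a hypothetical toy rollout in which a sequence of 20 force actions moves an object toward a target. Random search (propose a change, keep it if the loss improves) is a crude stand-in for pure trial and error, not an actual reinforcement learning algorithm; gradient descent uses the hand-derived gradient of the rollout. The constants, step size, and budgets are illustrative assumptions, and random search gets two rollouts per gradient step to roughly offset the cost of the backward pass.

```python
import random

DT, DECAY, TARGET = 0.05, 0.975, 1.0  # illustrative toy constants


def rollout(actions):
    """Toy 1D rollout: per-step force actions, damped velocity; returns final position."""
    x = v = 0.0
    for a in actions:
        v = DECAY * v + a * DT
        x += v * DT
    return x


def loss(actions):
    return (rollout(actions) - TARGET) ** 2


def grad(actions):
    """Gradient of the loss w.r.t. every action, backpropagated through the rollout."""
    gx = 2.0 * (rollout(actions) - TARGET)
    gv, g = 0.0, [0.0] * len(actions)
    for t in reversed(range(len(actions))):
        gv += gx * DT    # x[t+1] = x[t] + v[t+1] * DT
        g[t] = gv * DT   # v[t+1] = DECAY * v[t] + a[t] * DT
        gv *= DECAY
    return g


def random_search(n_actions, trials, sigma=0.5, seed=0):
    """Trial and error: perturb the best actions, keep only improvements."""
    rng = random.Random(seed)
    best = [0.0] * n_actions
    best_loss = loss(best)
    for _ in range(trials):
        cand = [a + rng.gauss(0.0, sigma) for a in best]
        cand_loss = loss(cand)
        if cand_loss < best_loss:
            best, best_loss = cand, cand_loss
    return best_loss


def gradient_descent(n_actions, iters, lr=20.0):
    acts = [0.0] * n_actions
    for _ in range(iters):
        acts = [a - lr * g for a, g in zip(acts, grad(acts))]
    return loss(acts)


if __name__ == "__main__":
    iters = 30
    print("random search loss:   ", random_search(20, trials=2 * iters))
    print("gradient descent loss:", gradient_descent(20, iters))
```

On this smooth toy problem gradient descent reaches a near-zero loss in 30 steps. Real fluid tasks are far less well-behaved, so the gap will not always be this clean.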

FluidLab’s future looks bright. The current work attempted to transfer trajectories optimized in simulation to real-world tasks directly in an open-loop manner.

For next steps, the team is working to develop a closed-loop policy in simulation that takes the state or visual observations of the environment as input and performs fluid manipulation tasks in real time, and then to transfer the learned policies to real-world scenes.
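The open-loop versus closed-loop distinction can be sketched with a hypothetical toy in which the simulator's damping differs from the “real” system's. An action sequence planned in simulation is replayed blindly in the real system and compared with a policy that reacts to the observed state at every step. A hand-tuned PD controller stands in for the learned, vision-based policy the team describes; all parameters and function names are illustrative assumptions.

```python
def step(x, v, a, damping, dt=0.01):
    """Semi-implicit Euler step of a damped 1D body driven by force a (unit mass)."""
    v = v + dt * (a - damping * v)
    return x + dt * v, v


def feedback_policy(x, v, target=1.0, kp=4.0, kd=2.0):
    """Stand-in closed-loop policy: a PD controller on the observed state."""
    return kp * (target - x) - kd * v


def run(damping, policy=None, actions=None, steps=1000):
    """Roll out either a closed-loop policy or a fixed open-loop action list."""
    x = v = 0.0
    taken = []
    for t in range(steps):
        a = policy(x, v) if policy is not None else actions[t]
        taken.append(a)
        x, v = step(x, v, a, damping)
    return x, taken


if __name__ == "__main__":
    SIM_DAMPING, REAL_DAMPING = 2.0, 1.0  # hypothetical sim-to-real mismatch
    _, planned = run(SIM_DAMPING, policy=feedback_policy)   # plan in simulation
    x_open, _ = run(REAL_DAMPING, actions=planned)          # replay open-loop
    x_closed, _ = run(REAL_DAMPING, policy=feedback_policy) # react to observations
    print(f"open-loop error:   {abs(x_open - 1.0):.4f}")
    print(f"closed-loop error: {abs(x_closed - 1.0):.4f}")
```

Because the replayed actions cannot react to the mismatch, the open-loop run settles far from the target, while the feedback policy corrects itself as it goes.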

The platform is publicly available, and the researchers hope it will benefit future studies in developing better methods for solving complex fluid manipulation tasks.

Ming Lin, a University of Maryland computer science professor, says: “Humans interact with fluids in everyday tasks, including pouring and mixing liquids (coffee, yogurts, soups, batter), washing and cleaning with water, and more.

“For robots to assist humans and serve in similar capacities for day-to-day tasks, novel techniques for interacting and handling various liquids of different properties (for example, viscosity and density of materials) would be needed and remains a major computational challenge for real-time autonomous systems.

“This work introduces the first comprehensive physics engine, FluidLab, to enable modeling of diverse, complex fluids and their coupling with other objects and dynamical systems in the environment.

“The mathematical formulation of ‘differentiable fluids’ as presented in the paper makes it possible for integrating versatile fluid simulation as a network layer in learning-based algorithms and neural network architectures for intelligent systems to operate in real-world applications.”

Gan and Xian wrote the paper alongside Dartmouth College assistant professor Bo Zhu, Columbia University PhD student Zhenjia Xu, MIT Brain and Cognitive Sciences postdoc Hsiao-Yu Tung, MIT EECS and CSAIL Principal Investigator Antonio Torralba, and CMU assistant professor Katerina Fragkiadaki.

The team’s research is supported by MIT-IBM Watson AI Lab, Sony AI, a DARPA Young Investigator Award, an NSF CAREER award, an AFOSR Young Investigator Award, DARPA Machine Common Sense, and the NSF. The research was presented at the International Conference on Learning Representations this May.