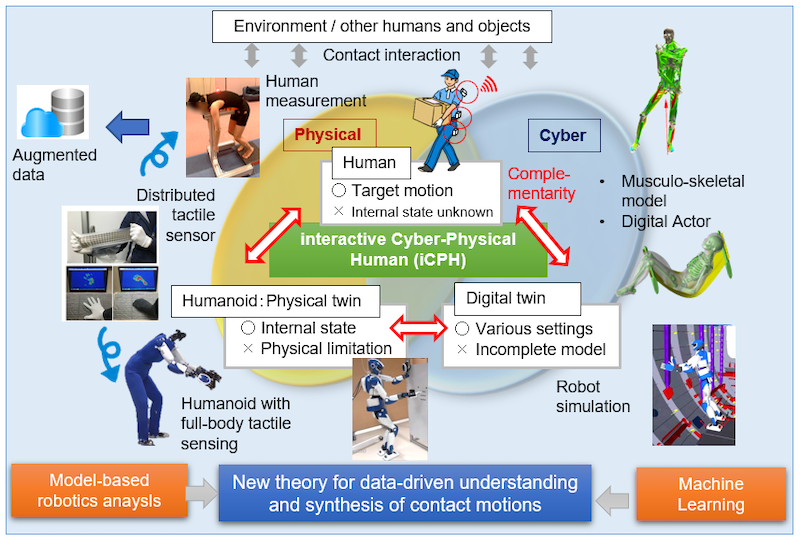

Performing human-like motions that involve multiple contacts is challenging for robots. To address this, a researcher from the Tokyo University of Science has envisioned an interactive cyber-physical human (iCPH) platform with complementary humanoid (physical twin) and simulation (digital twin) elements.

iCPH combines human measurement data, musculoskeletal analysis, and machine learning for data collection and augmentation. As a result, iCPH can understand, predict, and synthesize whole-body contact motions.

Humans naturally perform numerous complex tasks. These include sitting down, picking something up from a table, and pushing a cart. These activities involve various movements and require multiple contacts, which makes it difficult to program robots to perform them.

Recently, Professor Eiichi Yoshida of the Tokyo University of Science has put forward the idea of an interactive cyber-physical human (iCPH) platform to tackle this problem.

The platform can help researchers understand and generate contact-rich whole-body motions for human-like systems. His work was published in Frontiers in Robotics and AI.

Professor Yoshida describes the fundamentals of the platform. “As the name suggests, iCPH combines physical and cyber elements to capture human motions,” he says.

“While a humanoid robot acts as a physical twin of a human, a digital twin exists as a simulated human or robot in cyberspace. The latter is modeled through techniques such as musculoskeletal and robotic analysis. The two twins complement each other.”

This research raises several key questions. How can humanoids mimic human motion? How can robots learn and simulate human behaviors? And how can robots interact with humans smoothly and naturally?

Professor Yoshida addresses these questions within the iCPH framework. First, human motion is measured by quantifying the shape, structure, angle, velocity, and force associated with the movement of various body parts.

The sequence of contacts made by a human is also recorded. As a result, the framework allows various motions to be described generically through differential equations, and it allows the generation of a contact motion network upon which a humanoid can act.
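To make the idea of a contact motion network more concrete, here is a minimal sketch that treats each recorded motion as a sequence of contact states and counts the transitions between them. The representation (sets of body parts in contact), the labels, and the function name `build_contact_network` are assumptions made for illustration; the article does not specify how iCPH encodes its network.

```python
from collections import defaultdict


def build_contact_network(sequences):
    """Count transitions between consecutive contact states.

    Hypothetical helper: each sequence is a list of contact states, and
    each state is a frozenset of body parts touching the environment.
    """
    network = defaultdict(lambda: defaultdict(int))
    for seq in sequences:
        for current, nxt in zip(seq, seq[1:]):
            network[current][nxt] += 1
    # Convert to plain dicts so missing states are not created on lookup.
    return {state: dict(successors) for state, successors in network.items()}


# Illustrative contact states for sitting down (labels are assumptions).
STANDING = frozenset({"left_foot", "right_foot"})
HAND_ON_ARMREST = STANDING | {"right_hand"}
LOWERING = HAND_ON_ARMREST | {"pelvis"}
SEATED = STANDING | {"pelvis"}

recordings = [
    [STANDING, HAND_ON_ARMREST, LOWERING, SEATED],
    [STANDING, HAND_ON_ARMREST, LOWERING],
]

network = build_contact_network(recordings)
for state, successors in network.items():
    for nxt, count in successors.items():
        print(sorted(state), "->", sorted(nxt), "seen", count, "time(s)")
```

In a graph like this, nodes are contact states and weighted edges are observed transitions, so a planner could in principle search it for a feasible contact sequence. The real platform would also need the measured angles, velocities, and forces attached to each transition.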

Second, the digital twin learns this network via model-based and machine learning approaches. The two approaches are bridged by an analytical gradient computation method. Continual learning then teaches the robot simulation how to perform the contact sequence.
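As a rough illustration of how an analytical gradient can connect a physical model to a learning loop, the sketch below uses a deliberately simple stand-in: a point mass under constant acceleration, whose final position has a closed-form derivative with respect to the control input. The model, the function names, and the parameter values are all assumptions chosen for brevity; this is not the method described in the paper.

```python
def final_position(u, x0=0.0, v0=0.0, T=1.0):
    """Model-based part: position after time T under constant acceleration u."""
    return x0 + v0 * T + 0.5 * u * T**2


def loss(u, target):
    """Squared error between the reached position and the target."""
    return (final_position(u) - target) ** 2


def loss_gradient(u, target, T=1.0):
    """Analytical gradient of the loss with respect to u (chain rule)."""
    return 2.0 * (final_position(u, T=T) - target) * 0.5 * T**2


def fit_acceleration(target, lr=0.5, steps=100):
    """Learning part: plain gradient descent driven by the analytical gradient."""
    u = 0.0
    for _ in range(steps):
        u -= lr * loss_gradient(u, target)
    return u


u_star = fit_acceleration(target=1.0)
print("learned acceleration:", u_star)
print("reached position:", final_position(u_star))
```

Because the gradient comes from the model's equations rather than from numerical perturbation, each update is exact and cheap. That is the general appeal of combining model-based analysis with gradient-based learning.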

Third, iCPH enriches the contact motion network via data augmentation and applies vector quantization, which helps extract the symbols that express the "language" of contact motion.
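Vector quantization in general maps continuous feature vectors to the nearest entry of a small codebook, so that a motion becomes a sequence of discrete symbols. The following is a minimal sketch of that idea using NumPy and a few k-means-style updates; the two-dimensional toy features, the initial codebook, and the function names are assumptions for illustration and are not taken from the paper.

```python
import numpy as np


def quantize(features, codebook):
    """Return, for each feature vector, the index of its nearest codeword."""
    # Pairwise distances, shape (n_samples, n_codewords).
    dists = np.linalg.norm(features[:, None, :] - codebook[None, :, :], axis=2)
    return dists.argmin(axis=1)


def fit_codebook(features, initial_codebook, n_iters=10):
    """Refine the codebook by moving each codeword to the mean of its members."""
    codebook = initial_codebook.astype(float).copy()
    for _ in range(n_iters):
        symbols = quantize(features, codebook)
        for k in range(len(codebook)):
            members = features[symbols == k]
            if len(members) > 0:  # leave unused codewords unchanged
                codebook[k] = members.mean(axis=0)
    return codebook


# Toy motion features (made-up values), one row per time step.
features = np.array([
    [0.0, 0.1],
    [0.1, 0.0],
    [1.0, 0.9],
    [0.9, 1.0],
    [0.0, 0.0],
])
initial_codebook = np.array([[0.0, 0.0], [1.0, 1.0]])

codebook = fit_codebook(features, initial_codebook)
symbols = quantize(features, codebook)
print("symbol sequence:", symbols.tolist())
```

Each index in the resulting sequence plays the role of a symbol. Recurring patterns of symbols across many recordings are what a "language" of contact motion would be built from.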

Thus, the platform allows the generation of contact motion in situations the robot has not experienced before. In other words, robots can explore unknown environments and interact with humans by using smooth motions involving many contacts.

In effect, the author puts forward three challenges. These pertain to the general descriptors, continual learning, and symbolization of contact motion. Navigating them is necessary for realizing iCPH. Once developed, the novel platform will have numerous applications.

Professor Yoshida, on the applications of iCPH, says: “The data from iCPH will be made public and deployed to real-life problems for solving social and industrial issues. Humanoid robots can release humans from many tasks involving severe burdens and improve their safety, such as lifting heavy objects and working in hazardous environments.

“iCPH can also be used to monitor tasks performed by humans and help prevent work-related ailments. Finally, humanoids can be remotely controlled by humans through their digital twins, which will allow the humanoids to undertake large equipment installation and object transportation.”

With iCPH as a starting point, and with collaboration among different research communities, including robotics, artificial intelligence, neuroscience, and biomechanics, a future with humanoid robots may not be far off.