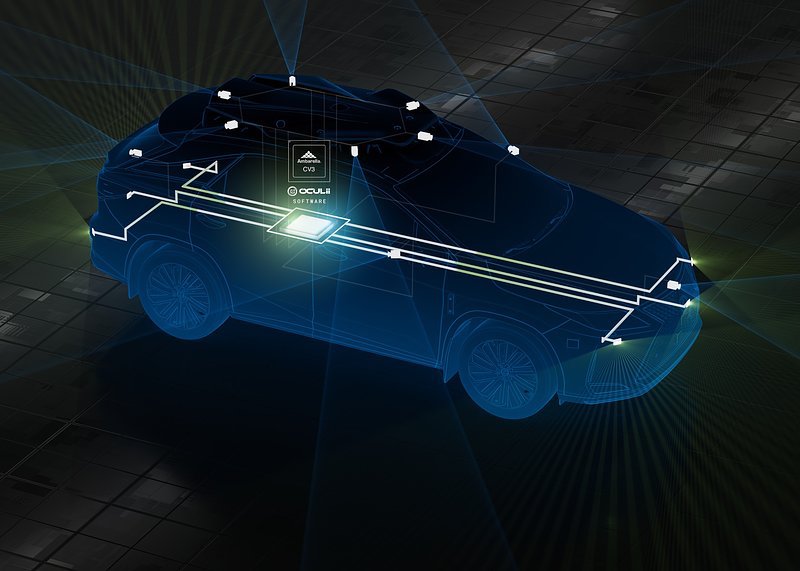

Ambarella, an edge AI semiconductor company, has launched what it says is “the world’s first centralized 4D imaging radar architecture”, which allows both central processing of raw radar data and deep, low-level fusion with other sensor inputs – including cameras, lidar and ultrasonics.

The company says this is a breakthrough architecture which provides greater environmental perception and safer path planning in AI-based ADAS and Level 2+ to Level 5 autonomous driving systems, as well as autonomous robotics.

It features Ambarella’s Oculii radar technology, including what the company describes as the only AI software algorithms that dynamically adapt radar waveforms to the surrounding environment. These provide a high angular resolution of 0.5 degrees, an ultra-dense point cloud of up to tens of thousands of points per frame and a long detection range of 500+ meters.

All of this is achieved with an order of magnitude fewer MIMO antenna channels, which reduces the data bandwidth and achieves significantly lower power consumption than competing 4D imaging radars.

Ambarella says its centralized 4D imaging radar with Oculii technology provides a flexible and high-performance perception architecture that enables system integrators to future-proof their radar designs.

Cédric Malaquin, team lead analyst of RF activity at Yole Intelligence, part of Yole Group, says: “There were ~100M radar units manufactured in 2021 for automotive ADAS.

“We expect this volume to grow 2.5-fold by 2027, given the more demanding regulations on safety and more advanced driving automation systems hitting the road. Indeed, from the current 1-3 radar sensors per car, OEMs will move to 5 radar sensors per car as a baseline.

“Besides, there is an exciting debate on the radar processing partitioning and many developments associated. One approach is centralized radar computing that will enable OEMs to offer significantly higher performance imaging radar systems and new ADAS/AD features while simultaneously optimizing the cost of radar sensing.”

To create this unique, cost-effective new architecture, Ambarella optimized the Oculii algorithms for its CV3 AI domain controller SoC family and added specific radar signal processing acceleration.

The CV3’s industry-leading AI performance per watt offers the high compute and memory capacity needed to achieve high radar density, range and sensitivity.

Additionally, a single CV3 can efficiently provide high-performance, real-time processing for perception, low-level sensor fusion and path planning, centrally and simultaneously, within autonomous vehicles and robots.

Fermi Wang, president and CEO of Ambarella, says: “No other semiconductor and software company has advanced in-house capabilities for both radar and camera technologies, as well as AI processing.

“This expertise allowed us to create an unprecedented centralized architecture that combines our unique Oculii radar algorithms with the CV3’s industry-leading domain control performance per watt to efficiently enable new levels of AI perception, sensor fusion and path planning that will help realize the full potential of ADAS, autonomous driving and robotics.”

The data sets of competing 4D imaging radar technologies are too large to transport and process centrally. Each module generates multiple terabits of data per second and consumes more than 20 watts of power, because it uses thousands of MIMO antennas to provide the high angular resolution required for 4D imaging radar.

That load is multiplied across the six or more radar modules required to cover a vehicle, making central processing impractical for other radar technologies, which must process radar data across thousands of antennas.
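The scale of that problem can be illustrated with a rough calculation. The sketch below uses the article's lower bounds of 20 watts per module and six modules per vehicle. The per-module data rate of 2 Tb/s is an assumption standing in for "multiple terabits per second", so the output is an order-of-magnitude illustration, not a measured figure.

```python
# Back-of-the-envelope aggregate load for conventional 4D imaging radar modules.
WATTS_PER_MODULE = 20.0    # article: "more than 20 watts" per module (lower bound)
MODULES_PER_VEHICLE = 6    # article: "six or more" modules (lower bound)
TBPS_PER_MODULE = 2.0      # ASSUMPTION: "multiple terabits per second" taken as 2 Tb/s


def aggregate_load(modules, watts_per_module, tbps_per_module):
    """Return (total watts, total Tb/s) for a set of identical radar modules."""
    return modules * watts_per_module, modules * tbps_per_module


total_watts, total_tbps = aggregate_load(
    MODULES_PER_VEHICLE, WATTS_PER_MODULE, TBPS_PER_MODULE
)
print(f"Radar power budget: at least {total_watts:.0f} W")
print(f"Raw data to transport (assumed rate): about {total_tbps:.0f} Tb/s")
```

Under these assumptions, a central processor would have to receive around 12 Tb/s of raw radar data while the radar modules alone draw at least 120 W.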

By applying AI software to dynamically adapt the radar waveforms generated with existing monolithic microwave integrated circuit (MMIC) devices, and using AI sparsification to create virtual antennas, Oculii technology reduces the antenna array for each processor-less MMIC radar head in this new architecture to 6 transmit x 8 receive antennas.

Overall, the number of MMICs is drastically reduced, while achieving an extremely high 0.5 degrees of joint azimuth and elevation angular resolution.
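For context on the 6 x 8 figure: in a conventional MIMO radar, each transmit/receive pair contributes one virtual channel, so the physical array yields transmit x receive virtual channels. The short sketch below shows only that standard relationship. It does not model Oculii's AI sparsification, the details of which the company has not disclosed here, and the function name is hypothetical.

```python
def mimo_virtual_channels(n_tx: int, n_rx: int) -> int:
    """Standard MIMO radar relationship: one virtual channel per Tx/Rx pair."""
    if n_tx <= 0 or n_rx <= 0:
        raise ValueError("antenna counts must be positive")
    return n_tx * n_rx


# Physical array quoted in the article: 6 transmit x 8 receive antennas.
channels = mimo_virtual_channels(6, 8)
print(f"Conventional MIMO virtual channels: {channels}")
```

This gives 48 conventional virtual channels per radar head, against the thousands of antennas the article attributes to competing modules. Any further virtual antennas would come from the adaptive waveforms and sparsification described above.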

Additionally, Ambarella’s centralized architecture consumes significantly less power at the maximum duty cycle and reduces the bandwidth for data transport by 6x, while eliminating the need for pre-filtered edge processing and its resulting loss of sensor information.

This cost-effective, software-defined centralized architecture also enables dynamic allocation of the CV3’s processing resources, based on real-time conditions, both between sensor types and among sensors of the same type.

For example, in extreme rainy conditions that diminish long-range camera data, the CV3 can shift some of its resources to improve radar inputs. Likewise, if it is raining while driving on a highway, the CV3 can focus on data coming from front-facing radar sensors to further extend the vehicle’s detection range while providing faster reaction times.
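One way to picture this kind of dynamic allocation is as a fixed compute budget split across sensors in proportion to condition-dependent priorities. The sketch below is purely illustrative: the sensor names, weights and function names are hypothetical and do not reflect Ambarella's actual scheduler or any CV3 API.

```python
from typing import Dict


def allocate_compute(weights: Dict[str, float], budget: float = 1.0) -> Dict[str, float]:
    """Split a fixed compute budget across sensors in proportion to their weights."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one sensor needs a positive weight")
    return {name: budget * w / total for name, w in weights.items()}


def condition_weights(heavy_rain: bool, on_highway: bool) -> Dict[str, float]:
    """Hypothetical priority weights; the numbers are illustrative only."""
    weights = {
        "front_camera": 3.0,
        "front_radar": 2.0,
        "corner_radars": 2.0,
        "rear_radar": 1.0,
    }
    if heavy_rain:
        weights["front_camera"] = 1.0      # long-range camera data is degraded
        weights["front_radar"] += 2.0      # shift resources toward radar
        if on_highway:
            weights["front_radar"] += 2.0  # favor forward detection range
    return weights


clear = allocate_compute(condition_weights(heavy_rain=False, on_highway=False))
rain = allocate_compute(condition_weights(heavy_rain=True, on_highway=True))
for sensor in clear:
    print(f"{sensor:14s} clear={clear[sensor]:.2f}  rain+highway={rain[sensor]:.2f}")
```

Because the budget is shared, raising the front radar's priority in rain automatically reduces the share given to the degraded camera, rather than requiring each sensor to carry its own worst-case processor.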

Ambarella says this cannot be done with an edge-based architecture, where the radar data is processed at each module and where processing capacity is specified for worst-case scenarios and often goes underutilized.