US drivers may be treating their partially autonomous vehicles as fully self-driving, according to a new study.

Research shows that owners of advanced systems, such as General Motors’ Super Cruise or Tesla’s Autopilot, believe that these technologies have more capabilities than they actually do.

Academics are concerned that this misplaced confidence could lead to more unnecessary collisions in the future.

The State of Partially Autonomous Vehicle Technology

While self-driving vehicles have been around for at least ten years by some measures, they are still not ready for the mainstream. Many companies, including Tesla and GM, have software that can comfortably drive someone to their destination under controlled conditions. But real-world situations keep getting in the way.

The main problem is “corner cases”. These are rare events that fall outside of statistical norms and can trick vehicles’ software.

While a Tesla might successfully complete 99 percent of journeys autonomously without any driver input, there is still 1 percent of trips that could result in owners going to a car accident lawyer, and that’s just too high.

Incident rates need to be around 0.0001 percent or lower to convince regulators that such systems are safe.
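To put those two percentages side by side, here is a rough back-of-the-envelope sketch. It assumes both figures are per-trip rates and uses a round one million trips, a number chosen purely for readability rather than taken from any study.

```python
# Illustrative arithmetic only. Both rates are the figures quoted above;
# the trip count is an assumed round number, not real fleet data.
TRIPS = 1_000_000


def expected_incidents(rate_percent: float, trips: int) -> float:
    """Expected number of incident trips for a per-trip rate given in percent."""
    return rate_percent / 100 * trips


today = expected_incidents(1.0, TRIPS)      # the hypothetical 1 percent
target = expected_incidents(0.0001, TRIPS)  # the threshold regulators may want

print(f"At 1 percent: about {today:,.0f} incident trips per million")
print(f"At 0.0001 percent: about {target:,.0f} incident trip per million")
print(f"Required improvement: roughly {today / target:,.0f}x")
```

On these assumptions, the gap between the two figures is about four orders of magnitude, which is why closing it is such a tall order.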

Getting there is going to be a challenge, though. By definition, corner cases don’t have a well-defined distribution of their own.

Therefore, it is hard to see how any neural net-based machine learning system could help. The software simply doesn’t have the ability to use common sense when it encounters a tricky situation.

It has to make judgments based on prior learning. And when the training set is thin, as it is with corner cases, accidents happen.

Even so, that hasn’t stopped industry leaders from making remarkable claims. In 2017, for instance, Tesla CEO Elon Musk said that fully autonomous technology would arrive in 2018. It’s now 2022 and we’re still waiting.

And given the reasoning outlined above, we will probably still be waiting in ten years’ time. That’s because the technology simply cannot work the way that manufacturers intend it to under the current paradigm. They need to make a shift.

What Did the New Research Say?

The new research comes from the Insurance Institute for Highway Safety (IIHS), a group dedicated to making vehicles safer. Its survey found that users of partially autonomous technologies were much more likely to perform non-driving-related activities while piloting their vehicles.

In simple terms, that means that they were far more likely to eat or text while driving than people driving traditional vehicles.

The study was robustly designed and well funded. Investigators explored the driving habits of more than 600 Autopilot and Super Cruise users.

According to the results, 42 percent of Autopilot users and 53 percent of Super Cruise users were confident in treating their vehicles as fully self-driving, even though the manufacturers of these vehicles make no such claims.

The reason for this behavior comes down to how these systems perform in the real world. Most of the time, they successfully take their owners to their desired destination.

They’re able to match the speed of the car in front, park in tight spaces, and drive around corners, following satellite navigation. This performance gives drivers a false sense of security. For many, falling asleep at the wheel worked 30 times, so what’s the harm in the 31st?

Unfortunately, statistics don’t work like that. A run of uneventful trips says little about the next one, and merely pretending to supervise a partially autonomous vehicle is dangerous and increases the risk of crashes.
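A quick sketch shows why a streak of safe trips proves so little. It assumes, purely for illustration, that each unsupervised trip carries the same independent 1 percent chance of an incident (the hypothetical figure used earlier in this article, not a measured rate).

```python
# Illustrative only: assumes every trip has the same, independent risk.
PER_TRIP_RISK = 0.01  # hypothetical 1 percent, not a measured value


def chance_of_clean_streak(per_trip_risk: float, trips: int) -> float:
    """Probability that every one of `trips` trips ends without an incident."""
    return (1 - per_trip_risk) ** trips


def chance_of_at_least_one_incident(per_trip_risk: float, trips: int) -> float:
    """Probability that at least one of `trips` trips ends in an incident."""
    return 1 - chance_of_clean_streak(per_trip_risk, trips)


thirty_clean = chance_of_clean_streak(PER_TRIP_RISK, 30)
year_of_commutes = chance_of_at_least_one_incident(PER_TRIP_RISK, 500)

print(f"Chance of 30 clean trips in a row: {thirty_clean:.0%}")
print(f"Chance of at least one incident in 500 trips: {year_of_commutes:.0%}")
```

Under these assumptions, roughly three out of four drivers would see 30 trouble-free trips in a row even with a risky system, yet an incident becomes almost certain once the trips run into the hundreds.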

Most drivers of these vehicles are early adopters and have a poor understanding of how the systems actually work. Dealers are not spending enough time explaining to customers what partially autonomous really means, and manufacturers are using misleading branding to describe their systems.

The term “Autopilot”, for instance, sounds to most consumers like something that can drive itself, even if that’s not what it means in the aerospace sector.

Crash Investigations

The new study comes at an interesting time for car makers who implement partially autonomous systems in their vehicles. The National Highway Traffic Safety Administration (NHTSA) is investigating Tesla Autopilot crashes to see whether the system poses a threat to the public.

Dozens of the company’s vehicles have been involved in alleged incidents where autonomous technology was in use.

Other companies, such as GM, are proposing solutions. They believe that greater driver engagement and education will improve the situation. If they can educate their customers better, regulatory and public perceptions will improve.

With that said, GM is still making bold claims about its systems. It says that they work on more than 400,000 miles of North American highways, with that figure increasing all the time.

And perhaps this is the model for the future. While automakers can’t provide a general solution for all corner cases, they can train vehicles on specific sections of American roads and prepare them for the conditions found there. Over time, learning occurs and cars get better at navigating familiar territory.

If a driver takes an unfamiliar road, the system simply hands control back to the driver for safety reasons. Highway driving seems particularly amenable to these technologies, but city driving is less so.

Rebranding of Autonomous Features

Consumers don’t understand the difference between terms like “autopilot”, “autonomous”, and “automatic”. For most, they just mean that the machine does everything for you.

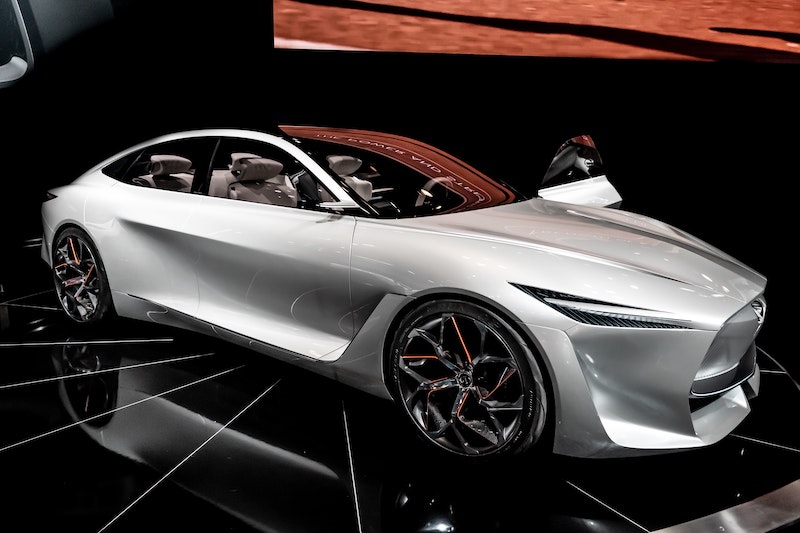

Because of this, automakers need to rebrand (at least until they build systems that really can do everything for drivers). Nissan’s ProPILOT Assist is a good example of this. It deliberately includes the word “assist” in its name, and that is something that most drivers can understand. A system that assists you is one that helps you. It doesn’t do everything for you.

Nissan’s system also makes it clear that drivers need to have their hands on the wheel to take over at any moment. The Japanese car company knows that its technology isn’t perfect. It’s just a system that reduces the need to use the steering wheel and pedals. It doesn’t eliminate it.

Car companies should be careful with autonomous technology. While it has the potential to transform the world, regulators will crush it if they think it’s too unsafe.

Main image by Tom Strecker on Unsplash