“How do we build robots that can optimally explore space?” is the core question behind Dr Frances Zhu’s research at the University of Hawai‘i. One part of the answer is “with motion capture”.

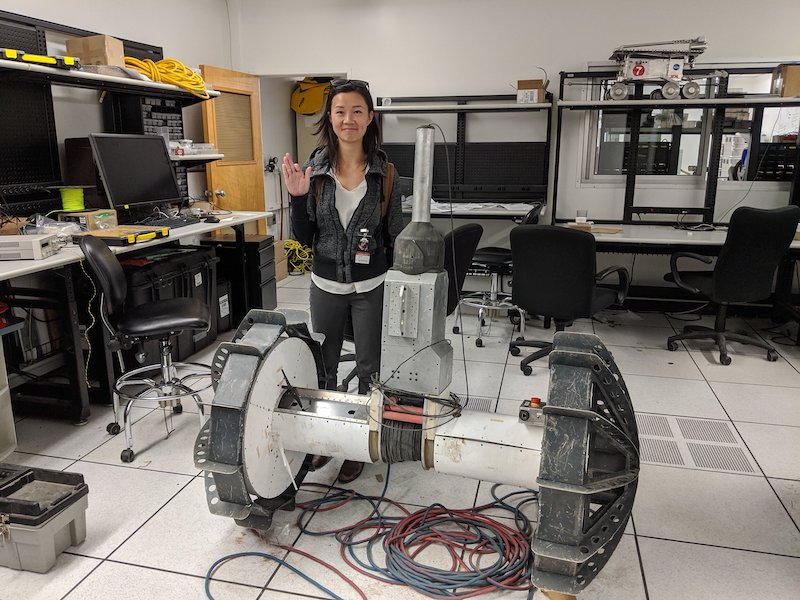

“It is my hope that my research contributes to the way extraterrestrial robots move and make decisions on other planets,” explains Zhu (main image), an assistant researcher and deputy director at the University’s Hawai‘i Institute of Geophysics and Planetology.

That research is in its early stages, but NASA has seen the value in it and awarded Zhu an EPSCoR grant for a project titled “Autonomous Rover Operations for Planetary Surface Exploration using Machine Learning Algorithms”.

Specifically, Zhu’s project focuses on robots that explore extreme terrain on lunar and planetary surfaces. “There are a few questions that I want to answer,” she says.

“For example, the lunar South Pole has a lot of water ice, we just don’t know exactly how much. It’s important for when we go to the moon and establish some kind of habitat; we need to have a large water reserve.

“So imagine a little rover just landing, waking up and measuring how much ice is right beneath it, and then figuring out the same thing at the next location. And imagine doing that sequentially, iteratively, until you map out the entire ice distribution of a crater.

“Another question, which is explicitly using the Vicon system, is identifying what the dynamics model of a rover is in terrain that you’ve never seen before. So, a rover wakes up on a planetary surface, but it has no idea what it’s like. It’s unlike any kind of Earth terrain.

“Can you take measurements of its states, like position and orientation, and then over time come up with a model that predicts the robot’s next state? This is a method called system identification. We’re going to use the Vicon motion data to conduct this research.”
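To make the idea of system identification concrete, here is a minimal sketch in Python. It is not Zhu’s method: it assumes, purely for illustration, that the rover can be approximated by a linear discrete-time model x[k+1] ≈ A x[k] + B u[k], and it fits A and B by least squares to logged states and drive commands. The two-element state (position, velocity), the single drive command, the "true" matrices and the synthetic data are all hypothetical stand-ins for real motion capture logs.

```python
import numpy as np

def fit_linear_model(states, inputs):
    """Least-squares fit of x[k+1] ~ A x[k] + B u[k].

    states: array of shape (N, n), one measured state per time step
    inputs: array of shape (N - 1, m), the command applied at each step
    """
    x_now = states[:-1]                       # x[k]
    x_next = states[1:]                       # x[k+1]
    regressors = np.hstack([x_now, inputs])   # [x[k], u[k]]
    theta, *_ = np.linalg.lstsq(regressors, x_next, rcond=None)
    n = states.shape[1]
    A = theta[:n].T
    B = theta[n:].T
    return A, B

# Hypothetical "true" dynamics, used only to generate synthetic data.
A_true = np.array([[1.0, 0.1],
                   [0.0, 0.9]])
B_true = np.array([[0.0],
                   [0.1]])

rng = np.random.default_rng(0)
num_steps = 200
inputs = rng.normal(size=(num_steps, 1))      # random drive commands
states = np.zeros((num_steps + 1, 2))
for k in range(num_steps):
    states[k + 1] = A_true @ states[k] + B_true @ inputs[k]

# Add a little measurement noise, standing in for tracking error.
measured = states + rng.normal(scale=1e-4, size=states.shape)

A_est, B_est = fit_linear_model(measured, inputs)

# Use the identified model to predict the state one step ahead.
predicted_next = A_est @ measured[-1] + B_est @ np.array([0.5])
print("Estimated A:\n", A_est)
print("Estimated B:\n", B_est)
print("Predicted next state:", predicted_next)
```

A real rover on loose or icy terrain is unlikely to behave linearly, so in practice the least-squares step would be swapped for a richer model. The structure stays the same: log states and commands, fit a model, then check how well it predicts the next state.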

Unknown terrain

The challenges that these questions present are, of course, manifold. “Humans haven’t been to the surface of Mars,” says Zhu. “Only a few humans have been to the surface of the moon, and they’ve brought moon rocks back, but just from certain locations. So that terrain isn’t well characterized.”

Zhu notes that even here on Earth, terrain underfoot can vary hugely. Humans intuitively deal with different conditions in a way that feels like second nature but is, by the standards of most robots, highly advanced.

“The question, then, is if you don’t have human intuition, can you formalize these environmental inputs, and then create some kind of control in the way that you locomote, all in an algorithm without human intuition and intelligence in the loop?” says Zhu.

How motion capture can boost robot intelligence

Zhu hopes that the work she’s doing with her Vicon system can inform future rover designs.

“Motion capture is the center of everything that I do,” she says. “A little bit of context: right now, NASA’s rover missions have extremely limited motion prediction based on a robot’s dynamics model.

“It primarily has something called open-loop control, where you think of the path that you’re going to take and you just implement that control without high-level feedback.

“There’s some low-level feedback where there’s a little bit of course correction, but there’s not really that much prediction for something like a slippage. There’s not any high level of intelligence.

“My dream is to someday use this data and these modeling techniques to upload an autonomy algorithm to future rover missions.

“This is going to be especially important for missions that are farther away than the moon and Mars, because that communication delay is going to prohibit any kind of real-time feedback.”
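A rough calculation shows the scale of that delay. The sketch below computes the one-way and round-trip light travel time for a few distances. The distances are approximate, commonly cited figures rather than numbers from Zhu’s project, and the calculation ignores relay and processing overhead, so real delays would be longer.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum, km/s

def one_way_delay_s(distance_km):
    """Light travel time in seconds over the given distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S

# Approximate Earth-to-target distances in km (assumed, for illustration).
DISTANCES_KM = {
    "Moon (average)": 384_400,
    "Mars (near closest approach)": 54.6e6,
    "Mars (near farthest)": 401e6,
}

for target, distance_km in DISTANCES_KM.items():
    one_way = one_way_delay_s(distance_km)
    print(f"{target}: one-way {one_way:.1f} s, round trip {2 * one_way:.1f} s")
```

At lunar distances a command and its response take a few seconds to make the round trip. At Mars the round trip runs from several minutes to well over half an hour, which rules out steering a rover through a slip as it happens.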

To gather the data she needs, Zhu has procured six Vantage V5 cameras and is running her setup using Vicon’s Tracker software. Zhu previously worked with a competitor system, but says that she needed technology that had been subjected to greater levels of testing for this project.

“There’s this level of academic rigor to Vicon technology that was really compelling for me, and which is what convinced me to buy this system versus one of the competitors,” she says.

“Vicon will enable me to take my rover outside and take precise vehicle state for my dynamic modeling work. We’re going to augment the motion data with an IMU, which will give angular velocity and acceleration data, as well as a certain amount of tilt data.

“We have a GPS on board for outdoor tests. We have stereo cameras to hopefully get some visual odometry.”

Before Zhu even begins extrapolating her findings to extraterrestrial environments, however, outdoor capture presents its own set of challenges.

“The terrain might be quite uneven and extreme when it comes to the slope or the iciness. Because the research is based around characterizing ice I imagine that, as experiment operators, we’re also going to have to set up this camera system on an icy slope.

“The cameras will need to be capable enough that if we are just stuck with putting cameras far away from each other then we can cover a large enough capture volume with enough precision. We also have to make sure that we maintain constant tracking through various lighting conditions. And we’re not sure how the ice might affect the reflection, or whether it will create more noise.”

All the different robots

For now, these are problems for the future. That doesn’t stop Zhu from jumping to new research ideas, however, when the conversation turns to markerless capture and Vicon’s recent acquisition of Contemplas.

“I’m just thinking about the applications for a lunar base,” she muses. “It might make sense to set up these markerless cameras so that you are always monitoring the motion of the different robots that you’re operating.

“That way, you don’t have to force all of the sensing to be on the robot itself and could instead have some kind of environment-sensing that’s external to the machine.

“It would be very cool to also work with amphibious vehicles,” Zhu says, jumping delightedly back to Earth and the variety her field offers her.

“So going from land to water, or land to air. Just all the different robots, all their fun shapes and types of movement.”