From the iPhone to the Mac, iPad, iPod and Apple Watch, Apple has excelled, and continues to excel, in hardware innovation and software development.

However, not much is known about Apple’s plans for Artificial Intelligence (AI) or how it is used in Apple products and in apps in general.

At its recent WWDC keynote, Apple appeared to take a quieter, yet smarter approach, adding several features and updates with machine learning at their core.

The idea behind incorporating machine learning and AI into Apple products is to build amazing experiences for its consumers so they can do what they never imagined they could.

Apple engineers and researchers are collaborating to integrate software and hardware across the company’s devices and improve user experiences while protecting users’ data. In this way, they aim to create world-class storage, computing and analytics tools capable of taking on the most challenging problems in AI and machine learning.

Let’s recap some of the main AI solutions that Apple uses in its devices to make user experiences not only amazing, but also smarter.

How Apple Implements AI in Its Devices

Sprinkled throughout iOS, macOS, iPadOS and watchOS are a number of features and updates that have AI and machine learning at their heart. Here are a few of the prominent ones you can find:

- Face ID

- Handwashing

- Handwriting recognition

- Native sleep tracking

- App Library suggestions

- Translate app

- Sound recognition

Let’s look at how Apple uses AI in each of these functionalities.

Face ID

Face ID uses facial recognition patterns to generate a model that is specific to your face. This makes it easy for your device to identify you so that you can unlock it or perform other functions on it.

Similar face recognition is available for cameras connected to HomeKit. Facial recognition models and patterns are saved on your devices so that the camera can identify someone in front of your home when they ring the doorbell.

Face recognition in HomeKit also lets you use people tagged in photos on your device to identify visitors and even announce them by name.

Handwashing

Handwashing is an AI feature on Apple Watch that detects when you’re washing your hands.

The Watch detects handwashing motions and sounds, then starts a 20-second countdown timer to help ensure that you wash your hands effectively. It can also notify you if you haven’t washed your hands within minutes of returning home.
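To make the trigger logic concrete, here is a minimal Python sketch of a countdown driven by motion and sound detection. It is not Apple’s implementation: the `HandwashTimer` class, the per-second confidence scores and both thresholds are hypothetical, and only the 20-second duration comes from the feature description.

```python
from dataclasses import dataclass

WASH_SECONDS = 20        # countdown length described for the feature
MOTION_THRESHOLD = 0.6   # hypothetical confidence cut-off for motion
SOUND_THRESHOLD = 0.6    # hypothetical confidence cut-off for sound


@dataclass
class HandwashTimer:
    """Tracks a countdown driven by one pair of detector scores per second."""
    remaining: int = 0   # seconds left; 0 means no countdown is running
    completed: int = 0   # number of finished 20-second washes

    def update(self, motion_score: float, sound_score: float) -> str:
        """Feed one second of scores and return the resulting state."""
        # Both signals must agree before we treat the second as handwashing.
        washing = (motion_score >= MOTION_THRESHOLD
                   and sound_score >= SOUND_THRESHOLD)
        if self.remaining == 0:
            if washing:
                # The second that triggers the timer counts as the first one.
                self.remaining = WASH_SECONDS - 1
                return "started"
            return "idle"
        if not washing:
            # Keep the remaining time so the user can resume washing.
            return "paused"
        self.remaining -= 1
        if self.remaining == 0:
            self.completed += 1
            return "done"
        return "counting"
```

Calling `update` once per second with high scores returns "started", then "counting", and finally "done" on the twentieth second. Requiring both signals is a design choice in this sketch meant to reduce false triggers from motion alone.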

Handwriting recognition

If you have an iPad, you get handwriting recognition with your Apple Pencil, which recognizes what you write and identifies English and Chinese characters.

The machine learning model, built from large amounts of data, identifies your strokes and translates them into typed characters. Any handwriting is converted into typed text, which can then be pasted into a document or used in an online search.

Native sleep tracking

Apple announced the introduction of sleep tracking with watchOS 7, which takes a holistic approach to sleep by giving users valuable tools that help them get quality sleep.

The sleep tracking feature detects micro-movements using the watch’s accelerometer to capture when you sleep and how much sleep you get each night. This way, you get to see a visual representation of your periods of wakefulness and sleep, and view your sleep patterns on a weekly basis.

Through machine learning, the Watch can classify your movements, including activities such as dancing, and detect whether you’re asleep or awake.
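As a rough illustration of the idea, the Python sketch below labels fixed-size windows of accelerometer movement readings as asleep or awake. It swaps the machine learning model for a plain threshold, and the function names, window size and threshold value are all assumptions made for the example rather than anything Apple has published.

```python
from statistics import mean

MOVEMENT_THRESHOLD = 0.05  # hypothetical: mean movement below this is "asleep"


def classify_windows(magnitudes, window=5, threshold=MOVEMENT_THRESHOLD):
    """Label each full window of movement readings 'asleep' or 'awake'."""
    labels = []
    # Step through the readings one full window at a time; a trailing
    # partial window is ignored.
    for start in range(0, len(magnitudes) - window + 1, window):
        chunk = magnitudes[start:start + window]
        labels.append("asleep" if mean(chunk) < threshold else "awake")
    return labels


def total_sleep_minutes(labels, minutes_per_window=1):
    """Sum the time covered by windows labelled 'asleep'."""
    return labels.count("asleep") * minutes_per_window
```

For example, five large readings followed by ten very small ones and five more large ones would yield one awake window, two asleep windows and a final awake window. A trained classifier would replace the threshold test, but the windowing and the nightly total would look much the same.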

App Library suggestions

In the new App Library layout on iOS, you’ll find a folder that uses on-device intelligence to display suggestions of apps you’re likely to need next.

While this may be a small AI-powered feature, it’s especially useful if you use particular apps every day, as it gets smarter the more you use your device.
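One simple way to picture suggestions that improve with use is a recency-weighted launch count, sketched below in Python. This is an illustrative stand-in, not Apple’s on-device model: the `suggest_apps` function, the half-life parameter and the timestamp format (hours) are hypothetical.

```python
from collections import defaultdict


def suggest_apps(launches, now, half_life_hours=24.0, top_n=4):
    """Rank apps by launch count, weighting recent launches more heavily.

    launches: iterable of (app_name, timestamp_in_hours) pairs.
    now: current time in the same hour units.
    """
    scores = defaultdict(float)
    for app, timestamp in launches:
        age = max(now - timestamp, 0.0)
        # A launch loses half of its weight every half_life_hours.
        scores[app] += 0.5 ** (age / half_life_hours)
    # Highest score first; ties are broken alphabetically for stable output.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [app for app, _ in ranked[:top_n]]
```

Every new launch adds to an app’s score, so the ranking adapts as more usage accumulates, while the decay keeps apps you have stopped using from lingering in the suggestions.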

Translate app

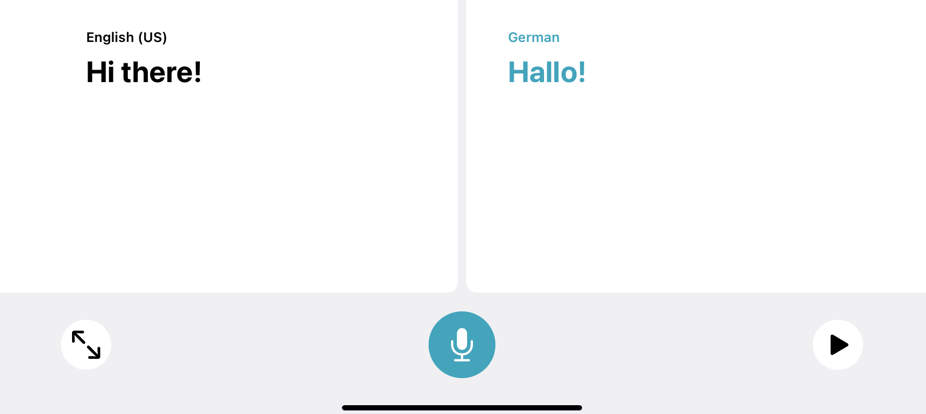

Translate is a new feature in iOS 14 that makes it easy to translate words and phrases on your iPhone in up to 11 languages. You can get definitions for translated words, have conversations across languages, save translations for quick access, and download languages for use offline.

Thanks to on-device machine learning, the app can work completely offline, detect the language being spoken, and translate your conversations in real time. Be sure to update your OS to get all these and other useful changes.

Sound recognition

While sound recognition wasn’t mentioned during WWDC, the iOS 14 feature, which was first spotted by a Reddit user, can benefit people with hearing disabilities. The iPhone can be set to listen for different sounds including a crying baby, barking dog, siren, doorbell, smoke detector alarm and more.

These AI and machine learning updates are just some examples of how Apple uses AI in its apps, and they show the company’s interest in delivering small conveniences to its users.

There are notable absences from this list, such as Siri (Apple’s digital assistant), which is AI-heavy and has a myriad of uses. Still, what we’ve listed here are the best examples of Apple’s approach to its users’ day-to-day needs.

Wrapping Up

Apple is a leading buyer of AI companies worldwide, with the aim of improving the machine learning and AI capabilities of its products and services.

While investing in AI may not boost Apple’s near-term revenue growth, it will go a long way towards strengthening its hardware devices and maintaining loyalty to its services ecosystem.

For Apple, AI is a strong tactic that plays to its deeply rooted reputation for delivering software that simply works, while avoiding iterative updates that are tech for tech’s sake.