A team of scientists has developed a new way of teaching surgical skills to robots, one they say is far more efficient than existing methods.

In a paper entitled Semi-Supervised Representation Learning from Surgical Videos, posted on arXiv (the preprint server run by Cornell University), the researchers call their algorithm Motion2Vec.

The team, which includes researchers from Intel's AI Labs, Google Brain, and UC Berkeley, says Motion2Vec learns from video observations "by minimizing a metric learning loss".
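The article does not say which metric learning loss Motion2Vec minimizes. As a rough illustration of the general idea, the sketch below implements the standard triplet margin loss, a common metric learning objective: it pulls an "anchor" embedding toward a "positive" (for example, a frame from the same action segment) and pushes it away from a "negative" (a frame from a different segment). The function names, the two-dimensional embeddings, and the margin of 0.2 are illustrative assumptions, not details taken from the paper.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_loss(anchor, positive, negative, margin=0.2):
    """Generic triplet margin loss (hypothetical example, not the paper's code).

    The loss is zero once the negative is at least `margin` farther from
    the anchor than the positive; otherwise it is the size of the shortfall.
    """
    d_pos = euclidean(anchor, positive)
    d_neg = euclidean(anchor, negative)
    return max(0.0, d_pos - d_neg + margin)


def mean_triplet_loss(triplets, margin=0.2):
    """Average the loss over a batch of (anchor, positive, negative) triplets."""
    return sum(triplet_loss(a, p, n, margin) for a, p, n in triplets) / len(triplets)


if __name__ == "__main__":
    anchor = [0.0, 0.0]
    positive = [0.1, 0.0]
    far_negative = [1.0, 0.0]    # already well separated: no penalty
    near_negative = [0.2, 0.0]   # too close to the anchor: penalized
    print(triplet_loss(anchor, positive, far_negative))
    print(triplet_loss(anchor, positive, near_negative))
```

Training an embedding network to drive this kind of loss down clusters frames of the same surgical action together, which is what makes segmenting a video into actions possible afterwards.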

They add: “Results give 85.5 percent segmentation accuracy on average suggesting performance improvement over several state-of-the-art baselines, while kinematic pose imitation gives 0.94 centimeter error in position per observation on the test set.”
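The two figures in the quote are evaluation metrics. A minimal sketch of how numbers like these are commonly computed is shown below, assuming segmentation accuracy is frame-wise (the share of video frames whose predicted action label matches the ground truth) and position error is the mean Euclidean distance between predicted and true positions per observation. The paper's exact evaluation protocol may differ, and the function names and action labels here are hypothetical.

```python
import math


def segmentation_accuracy(predicted, ground_truth):
    """Fraction of frames whose predicted segment label matches the true label."""
    if not predicted or len(predicted) != len(ground_truth):
        raise ValueError("label sequences must be non-empty and the same length")
    correct = sum(p == g for p, g in zip(predicted, ground_truth))
    return correct / len(ground_truth)


def mean_position_error(predicted_xyz, true_xyz):
    """Mean Euclidean distance per observation, in the units of the inputs (e.g. cm)."""
    if not predicted_xyz or len(predicted_xyz) != len(true_xyz):
        raise ValueError("position sequences must be non-empty and the same length")
    total = sum(math.dist(p, t) for p, t in zip(predicted_xyz, true_xyz))
    return total / len(true_xyz)


if __name__ == "__main__":
    # Hypothetical per-frame action labels for a four-frame clip.
    pred_labels = ["reach", "reach", "grasp", "pull"]
    true_labels = ["reach", "grasp", "grasp", "pull"]
    print(segmentation_accuracy(pred_labels, true_labels))  # 3 of 4 frames correct

    # Hypothetical tool positions in centimetres.
    pred_pos = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0)]
    true_pos = [(0.0, 0.0, 1.0), (1.0, 1.0, 1.0)]
    print(mean_position_error(pred_pos, true_pos))
```

Under these assumptions, 85.5 percent accuracy would mean roughly 855 of every 1,000 frames receive the correct action label, and a 0.94 cm error means the imitated position is, on average, just under a centimetre from the expert's.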

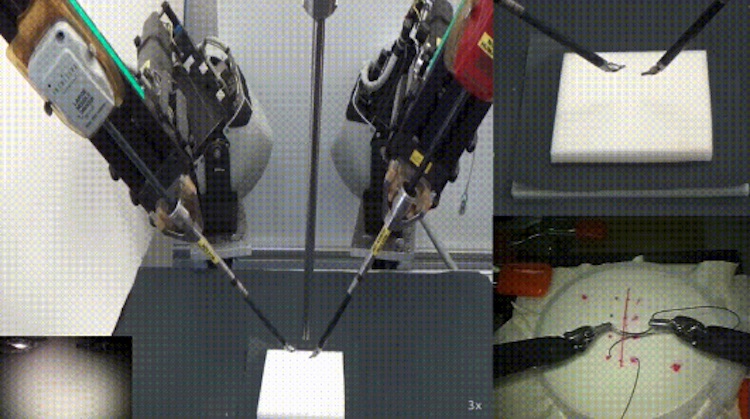

They say this means the model can learn tasks associated with robotic surgery, such as suturing, needle passing, needle insertion, and knot tying, having been trained only on publicly available surgical videos collectively known as the JIGSAWS dataset.

Their results show that surgical robots can be taught new manipulation skills by feeding them videos of expert demonstrations.

The authors of the paper are Ajay Kumar Tanwani, Pierre Sermanet, Andy Yan, Raghav Anand, Mariano Phielipp, and Ken Goldberg.