Nvidia is opening a new robotics research lab in Seattle, near the University of Washington campus. The lab is led by Professor Dieter Fox, senior director of robotics research at Nvidia and a professor in the UW Paul G. Allen School of Computer Science and Engineering.

The lab's charter is to drive breakthrough robotics research that enables the next generation of robots to perform complex manipulation tasks, work safely alongside humans, and transform industries such as manufacturing, logistics, and healthcare.

Professor Dieter Fox says: “In the past, robotics research has focused on small, independent projects rather than fully integrated systems.

“We’re bringing together a collaborative, interdisciplinary team of experts in robot control and perception, computer vision, human-robot interaction, and deep learning.”

Close to 50 research scientists, faculty visitors, and student interns will perform foundational research in these areas. To ensure the research stays relevant to real-world robotics problems, the lab will evaluate its work in large-scale, realistic scenarios for interactive manipulation.

What’s cooking in the robotics research lab?

The first of these challenge scenarios is a real-life kitchen where a mobile manipulator solves a variety of tasks, ranging from retrieving objects from cabinets to learning how to clean the dining table to helping a person cook a meal.

At an Open House event on January 11, the Seattle lab demonstrated its first manipulation system in its kitchen. The mobile manipulator integrates state-of-the-art techniques to detect and track objects, keep track of the state of the kitchen's doors and drawers, and open and close them to access objects for manipulation.

These approaches can be applied in arbitrary environments, requiring only 3D models of the relevant objects and cabinets.

Building on Nvidia’s expertise in physics-based, photorealistic simulation, the robot uses deep learning to detect specific objects with a model trained solely in simulation, so no tedious manual data labeling is required.

Nvidia’s highly parallelized GPU processing enables the robot to keep track of its environment in real time, using sensor feedback to manipulate objects accurately and to adapt quickly to changes in the environment.

The robot uses the Nvidia Jetson platform for navigation and performs real-time inference for processing and manipulation on Nvidia Titan GPUs. The deep learning-based perception system was trained using the cuDNN-accelerated PyTorch deep learning framework.

What makes the system unique is that it integrates a suite of cutting-edge technologies developed by the lab's researchers. Working together, these technologies detect objects, track the positions of doors and drawers, and generate control commands so that the robot can grasp objects and move them from one place to another.

The system is built on the following technologies:

- Dense Articulated Real-Time Tracking (DART): DART, which was first developed in Fox’s UW robotics lab, uses depth cameras to keep track of a robot’s environment. It is a general framework for tracking rigid objects, such as coffee mugs and cereal boxes, and articulated objects often encountered in indoor environments, like furniture and tools, as well as human and robot bodies including hands and manipulators.

- Pose-CNN: 6D Object Pose Estimation: Detecting the 6D pose (3D position and 3D orientation) of known objects is a crucial capability for robots that pick up and move objects in an environment. The problem is challenging because of changing lighting conditions and complex scenes caused by clutter and occlusions between objects. Pose-CNN is a deep neural network trained to detect objects and estimate their 6D poses using regular cameras.

- Riemannian Motion Policies (RMPs) for Reactive Manipulator Control: RMPs are a new mathematical framework that consistently combines a library of simple actions into complex behavior. RMPs allow the team to efficiently program fast, reactive controllers that use the detection and tracking information from Pose-CNN and DART to safely interact with objects and humans in dynamic environments.

- Physics-based Photorealistic Simulation: Nvidia’s Isaac Sim tool enables the generation of realistic simulation environments that model the visual properties of objects as well as the forces and contacts between objects and manipulators. A simulated version of the kitchen is used to test the manipulation system and to train the object detection network underlying Pose-CNN. Done on a real robot, this training and development would be expensive and time-consuming. Once simulation models of the objects and the environment are available, training and testing can be done far more efficiently, saving precious development time.
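The article does not say how the lab's RMP controllers are implemented. As a rough illustration of what "consistently combines a library of simple actions" can mean, the sketch below shows the general combination rule used for RMPs: each simple policy pairs a desired acceleration with a Riemannian metric that says how strongly it cares about each direction, and the policies are pulled back into joint space and blended as a metric-weighted average. It assumes linear task maps (so curvature terms vanish) and an invertible combined metric (the general framework uses a pseudo-inverse). All function names and the 2-DOF example are hypothetical.

```python
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]
# Hypothetical representation: an RMP is (J, a, M), where J is the Jacobian of a
# *linear* task map x = J q, a is the desired task-space acceleration, and M is
# the task-space metric (how strongly the policy cares about each direction).
RMP = Tuple[Matrix, Vector, Matrix]


def transpose(A: Matrix) -> Matrix:
    return [list(row) for row in zip(*A)]


def matmul(A: Matrix, B: Matrix) -> Matrix:
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


def matvec(A: Matrix, v: Vector) -> Vector:
    return [sum(a * b for a, b in zip(row, v)) for row in A]


def solve(M: Matrix, f: Vector) -> Vector:
    """Solve M x = f by Gaussian elimination with partial pivoting."""
    n = len(M)
    aug = [list(M[i]) + [f[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("combined metric is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (aug[i][n] - sum(aug[i][j] * x[j] for j in range(i + 1, n))) / aug[i][i]
    return x


def combine_rmps(rmps: List[RMP], dim: int) -> Vector:
    """Pull each RMP back into joint space and return the metric-weighted
    joint acceleration (sum J^T M J)^-1 (sum J^T M a)."""
    M_total = [[0.0] * dim for _ in range(dim)]
    f_total = [0.0] * dim
    for J, a, M in rmps:
        JT = transpose(J)
        pulled_f = matvec(JT, matvec(M, a))   # J^T M a
        pulled_M = matmul(matmul(JT, M), J)   # J^T M J
        for i in range(dim):
            f_total[i] += pulled_f[i]
            for j in range(dim):
                M_total[i][j] += pulled_M[i][j]
    return solve(M_total, f_total)


# Hypothetical 2-DOF example: a goal-reaching policy acting on both coordinates
# and an obstacle-avoidance policy that only sees the second coordinate.
goal_rmp = ([[1.0, 0.0], [0.0, 1.0]], [1.0, 0.0], [[1.0, 0.0], [0.0, 1.0]])
obstacle_rmp = ([[0.0, 1.0]], [-2.0], [[3.0]])
combined = combine_rmps([goal_rmp, obstacle_rmp], dim=2)
print(combined)  # [1.0, -1.5]
```

Because the obstacle policy carries a larger metric along the second coordinate, it dominates that direction (-1.5 rather than the goal policy's 0.0), while the goal policy alone still sets the first coordinate. Adding or removing a policy only adds or removes one term from each sum, which is what makes this style of composition convenient for building reactive behavior from simple pieces.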

Fox says: “We really feel that the time is right to develop the next generation of robots. By pulling together recent advances in perception, control, learning, and simulation, we can help the research community solve some of the world’s greatest challenges.”

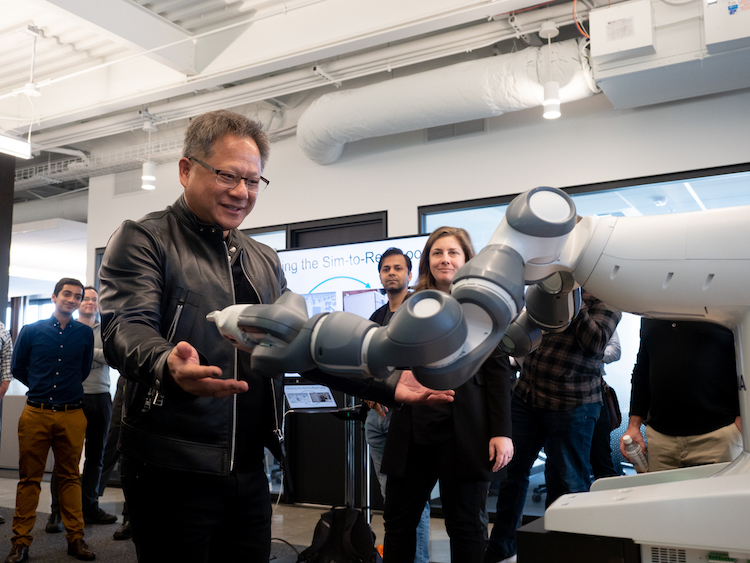

(Main picture: Jensen Huang, CEO of Nvidia, pictured at the new Seattle robotics lab.)